Singularity’s audio team had already shared a lot of interesting material on the dev diary blog, but that wasn’t enough for them: they also agreed to an interview for Designing Sound. I asked them several questions about different aspects of the game, which has really great sound work.

Thanks go to the whole audio team for taking the time to give such complete and detailed answers.

MK = Mark Killborn, Audio Lead

KS = Kevin Schilder, Lead Sound Designer

JS = Jer Sypult, Sound Designer

DB = Darren Blondin, Sound Designer

AB = Andy Bayless, Sound Designer

Designing Sound: How and when did you guys start to think about the sound of the game? How did the art and general concept influence you at this early stage?

KS: The sound design began to develop during the prototype phase along with everything else. There was a strong artistic and design influence derived from retro sci-fi movies like Forbidden Planet. We wanted to find a way to incorporate and infuse the mood and atmosphere we loved in those old movies. The sound of the Theremin was classic for creating that spooky, creepy feeling. Anyone who has seen those old sci-fi movies will relate to that sound and some great nostalgic memories.

This was a strong influence for the general ambience and soundscape we wanted to create. It worked well to accompany the slower pace and suspense of the initial game design. As the design developed to include stronger action and combat elements, we followed suit with our audio design. We sought to especially give powerful and satisfying impact to our weapons so they would hold their own with other top first-person shooters. The game finally ended up being a blend of those two things: creepy atmosphere and satisfying, intense action.

JS: When I was put on Singularity, the game was already in an early prototype form. The game mechanics and visuals were in an iterative process, which allowed an ebb and flow of the sound design.

I jokingly consider myself to be a backward sound designer. Most of my experience and knowledge is the result of reverse-engineering and trial-and-error in regards to audio and game development. As I continued working on the early prototypes of Singularity I found myself balancing traditional sound design needs with learning every aspect of the game engine. This ultimately led me to incorporate sound design into the game engine for a growing number of sounds. I became obsessed with granular sound design and was always striving to increase the amount of variation on common sounds. I experimented with interesting solutions for things like player/character footsteps, foley and physics. I learned that we could drive sound playback based on dynamic parameters in the game, like the delay between a heel-to-toe footstep sound in a rate-scaled animation, or the speed of an object or character and their respective foley movement sounds.

Later on in the project it really hit me in a phone conversation when (composer) Charlie Clouser stated that the game players are essentially the sound designers of the game. Although I had been experimenting with responsive sounds in the game prior to that point I simply hadn’t thought about game audio like that before. Ever since then I have incorporated that thinking into everything I do.

So for me, the early days of Singularity were a mixture of traditional sound design with a heavy dose of technical concepts and experimentation.

DS: In the dev diary blog, Darren Blondin talked about how the team worked to create a unique sonic experience by inventing and also experimenting with all kinds of things. Did you have a special source of inspiration? Any special workflow or techniques in the creative and experimental processes?

DB: I don’t like to follow the most obvious path, particularly when designing sci-fi themed sounds for a game with as much possibility as Singularity. For example, when making sound for a futuristic rocket, going into a sound library and entering the word “rocket” into the search field isn’t a good enough starting point. Instead, just having a clear impression about the desired sound, such as the texture of it, points me in some interesting directions to go in. I might end up grabbing the sound of a rolling skateboard and a crying baby for a flying rocket if there seems to be a connection between those elements and the envisioned rocket sound. Sometimes I’ll grab some really weird stuff without forethought just to get the ideas going but the best work results from having a sense for what the sound should be. Forethought helps illuminate more interesting and original creative directions.

There are many more possibilities for a new sound than what will actually work well. Likewise, the creative paths to choose are many, but most of them, even the insanely fun ones that seem brilliant at first, may not lead to the most amazing results in the context of the game environment. When beginning to design a sound, like deciding what types of recordings or instruments to use and what techniques or signal processes to shape them with, that process is completely unconstrained. The sound designer is completely at liberty to do what they want. When hooking these newly created sounds up in the game, some technical limitations start to influence the results. For example, I may have designed a nice collection of weapon sound effects, but the sound set is too large. Without having a creative vision, and planning for potential snags, the quality of the work can deteriorate during the creative process. The work can slow down or go in circles.

To help get a feel for creative possibilities, I like to hear sounds mixed into the game when designing them. Sounds have perceptual identities with respect to volume, frequency, texture and shape. And when sounds are mixed together or playing in series, these identities can change. For instance, you might think a certain sound is big, until you hear one immediately after it that is even bigger, then, magically, the sound that was once the big one is drained of its energy and becomes small. If a new sound is going to be interacting with other sounds, it’s helpful to hear them together while designing it. Paying attention to these interactions can help one to make sounds that fit the game more perfectly. Sometimes I’ll just grab an audio recording of the area in the game a new sound appears in and load that into an extra track in my audio editor so I can turn it on occasionally to get a sense for how the sound fits.

DS: How much field recording was needed? What kinds of sounds did you guys record to make the sonic world of Singularity?

MK: I think audio people in games will always say they wish they’d had more time for field recording. Game production schedules rarely allow for enough and the story is no different here. We did record some interesting source material though. One day we recorded some interesting sounds from one of our team members. He has a knack for generating the most obnoxious belches on command. Some of this content was used in the creation of our creature sounds, some of it remains on my hard drive as blackmail/embarrassment material for the future.

KS: Creature voices are always a great opportunity to develop something new and interesting. Since they are based on humans in some way, it makes sense to use human voice to lend real emotion and inflection to the final product. I recall pretty much destroying my voice once while recording a number of growls, snarls and strangled vocalizations. It’s not the kind of thing you can just find in a sound effects library. Time, space and location sometimes limit our use of Foley and field recording, but we love to do it whenever we can.

DS: A very interesting feature is the TMD and everything it can do, from moving and morphing elements to energy takes and new powers and weapons. How did you guys deal with the TMD and everything it affects?

MK: We tried to keep the sound of the TMD consistent throughout its various functions. There are consistent elements mixed into everything, including the sounds of the objects themselves being aged or reverted. Think of it as the sound of E99, infused in everything. We wanted the device to have a unique sonic identity and so it was critical to have this consistent approach.

The sound of it went through many iterations with various team members contributing. At least three of us touched the thing at various stages of the project.

JS: Early in production the TMD had separate age and revert functionality, so the player could control what age state they liked. With that additional functionality and control early on, I spent a bit of time experimenting with how to make the age states of objects sound satisfying to the player. The final technique I settled on went something like this.

First I designed the object aging sound, so that it sounded like things were deteriorating in phases, similar to past, present and future. I would generate a small pool of variations of these sounds.

For the opposite direction, I started by reversing those aging sound elements and trimming off any excess “tail” so that the animated transition time ended at the crescendo of the reversal. Once I was happy with the overall envelope and timing, I would then slowly comb through each reversed and trimmed variation, re-reversing short granules in time to the reversed envelope.

These granular slivers were now playing in their original direction, but the arrangement of the grains was in a reversed order. The result gives the reversion sounds an unnatural-yet-natural sound about them. Instead of merely sounding reversed, they “felt” reversed, but didn’t sound like they were playing in reverse.

Lastly, I would sweeten up these sounds with some residual elements that felt like things were “gluing” back together, ending with a subtle, settling sound to keep it less abrupt.
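As a rough illustration of the grain re-reversal Jer describes, here is a minimal sketch in Python. It assumes a fixed grain size and treats the sound as a plain list of samples; in the interview the grains were picked by hand in time with the reversed envelope, so the function name `reverse_grain_order` and the uniform grain length are purely illustrative, not the method used on the game.

```python
def reverse_grain_order(samples, grain_size):
    """Reverse the order of the grains while each grain still plays forward.

    Assumption: grains are a fixed number of samples long. The interview
    describes choosing them by ear, which this sketch does not attempt.
    """
    if grain_size <= 0:
        raise ValueError("grain_size must be positive")
    # Step 1: reverse the whole aging sound.
    reversed_samples = samples[::-1]
    out = []
    # Step 2: re-reverse each short grain so its content plays forward again.
    for start in range(0, len(reversed_samples), grain_size):
        grain = reversed_samples[start:start + grain_size]
        out.extend(grain[::-1])
    return out


aging = [1, 2, 3, 4, 5, 6, 7, 8]
print(reverse_grain_order(aging, 2))  # [7, 8, 5, 6, 3, 4, 1, 2]
```

Each pair of samples keeps its original direction, but the pairs arrive in reverse order, which is the "felt reversed without sounding reversed" idea in miniature.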

Even though the TMD functionality changed before we shipped Singularity some objects still switch age states, so the sounds remained in the game. The last batch of “ageables” were fully animated and had a more linear approach, consisting of a mixture of linear sounds for the animation as well as randomly-played short, key-framed, sounds for things like metal squeaks or dust coming together or settling.

DS: Darren also talked about the general environments and gave different examples of them. In these kinds of places, the ambiences are very expressive and unique. What did you guys set out to achieve, and how were these dark and creepy backgrounds of Katorga-12 created?

DB: When the player navigates through a ship to get a key plot element, that area gets very alive and interactive. It required so much sound just to support what the designers and artists were putting in to portray the rapid aging and deterioration of a huge ship that was just raised from the ocean floor; it was a feat just to get it all working smoothly. I’m not so sure that the pace of the game allows one to stop and take in all the sonic details going on during that event. When a wall rusts, you can hear the decay moving across the wall’s surface and these little flecks of metal falling on the floor right underneath. Every single burst of water that starts to squirt from a rivet hole, and every gushing pipe and rivulet of water has a sound attached to it that triggers at just the right moment. Being surrounded by all these little details is quite rich to the ears and helps paint a striking reality.

Detail and realism were among the main initiatives we embarked on with environmental sounds. Although it would have saved a lot of time, it was never just a matter of dropping in ambience that was attached to the player’s head like a pair of headphones or scattering it at random. When you walk through the rain in the village at the beginning of the game it sounds a little different everywhere you walk and every direction you face and it makes sense too. For example, if you’re facing a wall, you’ll hear the rain coming mostly from behind you. We didn’t want to place sound sources at random; the position of environmental sounds was carefully considered so that the environments felt more realistic.

AB: A lot of detailed thought went into creating the living environment you hear in Singularity. Every single sound on Katorga-12 was purposefully hand-placed as a narrative device to help tell the story of the island. One idea we worked with for much of the game’s development was the concept of the island being populated with all manner of beasts and creatures. Where do these creatures come from? How do they survive on the island? What will they do to an unwelcome stranger? The answers to all of these questions can be told with stories in the audio. The game’s various sewer tunnels are an example. As you wander through them you’ll witness the ways these mutant creatures have altered the environment to suit their own natural habitat. Clutches of eggs and walls dripping with slime certainly look interesting, but with the help of the audio we can paint a clearer picture of what these things mean in the context of the game. You’ll hear the hungry squeal of newborn Phase Tick larvae near the eggs, and suddenly it makes more sense as to why these creatures are rushing at you. The Ticks quickly go from being mindless monsters that attack the player because that’s what video game enemies do, to animals defending their young in a dangerous territory the player is destined to navigate. To summarize, we have a story behind the action instead of just a simple game mechanic.

MK: We spent a lot of time with ambience, as Andy and Darren have suggested. Each of us was responsible for a section of the game and we regularly reviewed each other’s work to ensure our work was coming together creatively. Over the years the audio team developed a large palette of ambient sounds to use in various areas, and we reused some of them to keep the feel consistent throughout the game, but we also used a lot of unique stuff. We always wanted new areas to sound fresh.

One of the great things about the Unreal engine is that we could quickly drop new sounds in and test them. It’s important for this process to be as fast as possible so you can iterate rapidly, and we had it moving along at a pretty incredible pace by the end of the project. We would have the game’s editor open on one screen, our sound tools on the other, and we’d just generate WAVs, drag and drop them into the world and see how they fit with the rest of the environment. From finished WAV file to in-game and running took less than 60 seconds.

DS: There are a lot of different weapons based on real creations, as well as new weapons, explosions and all kinds of destruction. What did you guys want to offer in this aspect?

JS: The weapons and explosions are some of the most complicated sound events coming out of Singularity.

The traditional weapon sounds were designed from original field recordings provided to us by Activision. Earlier versions of the weapon and explosion sounds were mono, but we ended up switching them out with stereo assets to make them sound bigger and wider. To accentuate the stereo field we used distinct sounds for each channel that were similar enough to sound natural, but different enough to provide a great, widened stereo field. Explosions typically have two layered assets (close and distant), whereas weapons have a minimum of three layers that consist of the main firing sound, an exterior reverberant late-reflection sound and finally a long-distance sound.

The stereo weapon firing sounds use mixing that calculates the distance and collapses the sound to mono dynamically at the furthest point. In surround sound configurations, the stereo channels are mapped to the quadraphonic speakers based off its direction. This means that the sound plays normally when the sound is in front of the player, but the output channels will change if fired from the sides or behind the player. When fired from the left side, the left channel of the sound is played in the surround left speaker, whereas the right channel is played out of the front left, giving you a stereo sound from the side.
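The distance-based collapse to mono can be sketched as follows. This is only an illustration under stated assumptions: the linear width curve, the function names `stereo_width` and `collapse_stereo`, and the `max_distance` parameter are hypothetical, and the interview does not say what curve the engine actually used.

```python
def stereo_width(distance, max_distance):
    """Return 1.0 (full stereo) at the source, falling to 0.0 (mono) at max_distance.

    Assumption: a linear fall-off, chosen only for simplicity.
    """
    if max_distance <= 0:
        raise ValueError("max_distance must be positive")
    return max(0.0, min(1.0, 1.0 - distance / max_distance))


def collapse_stereo(left, right, width):
    """Blend one stereo sample pair toward its mono average as width goes to 0."""
    mid = 0.5 * (left + right)
    out_left = mid + width * (left - mid)
    out_right = mid + width * (right - mid)
    return out_left, out_right


# A hard-left sample heard up close, halfway out, and at the furthest point.
for d in (0.0, 50.0, 100.0):
    print(d, collapse_stereo(1.0, 0.0, stereo_width(d, 100.0)))
```

At width 1.0 the pair passes through unchanged, and at width 0.0 both outputs equal the mono average, so the sound narrows smoothly as the shooter gets further away.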

The exterior late-reflection element is a quadraphonic sound that is dynamically toggled on and off via our audio occlusion system, as it determines when a sound is played in an interior or exterior location. In a surround sound configuration, this really helps make the weapon sounds feel like they are bouncing off of the distant environment surrounding the player.

The long-distance element fades in as you move away from the sound source and has a low-pass filter applied the further away you move from it.
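One way to picture the long-distance layer is a single distance value driving both its volume and its low-pass cutoff. The sketch below is hypothetical throughout: the `near` and `far` distances, the cutoff frequencies and the linear mapping are assumptions made for illustration, not values from the game.

```python
def long_distance_layer(distance, near, far,
                        open_cutoff_hz=20000.0, far_cutoff_hz=2000.0):
    """Return (gain, lowpass_cutoff_hz) for the long-distance layer.

    Assumptions: gain rises linearly from 0 at `near` to 1 at `far`, and
    the cutoff slides linearly from open_cutoff_hz down to far_cutoff_hz.
    """
    if far <= near:
        raise ValueError("far must be greater than near")
    t = max(0.0, min(1.0, (distance - near) / (far - near)))
    gain = t
    cutoff_hz = open_cutoff_hz + t * (far_cutoff_hz - open_cutoff_hz)
    return gain, cutoff_hz


for d in (0.0, 55.0, 100.0):
    print(d, long_distance_layer(d, near=10.0, far=100.0))
```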

The final aspect of the weapons and explosions is how they use our dynamic mixing system. Each weapon and explosion sound applies ducking that has a hand-crafted envelope over time to suit the envelope of the sound. The distance and amplitude of the weapon or explosion dictates the amount of ducking to do, so sounds played further away will not duck the mix. The end result allows all weapons and explosions to cut through the mix up close without clipping, and to subtly duck things out when at medium to long ranges.
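The ducking behaviour can be approximated with a small sketch. It assumes the hand-crafted envelope is a list of values between 0 and 1 and that ducking depth falls off linearly with distance; `max_duck_db` stands in for the loudness of the weapon. None of these names or curves come from the game's code.

```python
def duck_amount_db(max_duck_db, distance, max_distance):
    """Ducking depth in dB: full depth up close, none at or beyond max_distance.

    Assumption: linear fall-off with distance.
    """
    if max_distance <= 0:
        raise ValueError("max_distance must be positive")
    closeness = max(0.0, 1.0 - distance / max_distance)
    return max_duck_db * closeness


def duck_gains(envelope, depth_db):
    """Turn a 0..1 envelope into linear gains to apply to the rest of the mix."""
    return [10.0 ** (-(depth_db * e) / 20.0) for e in envelope]


# Hypothetical envelope: duck hard on the transient, then release.
envelope = [1.0, 0.8, 0.4, 0.1, 0.0]
print(duck_gains(envelope, duck_amount_db(12.0, distance=5.0, max_distance=100.0)))
print(duck_gains(envelope, duck_amount_db(12.0, distance=100.0, max_distance=100.0)))
```

A distant shot produces a depth of 0 dB, so every gain stays at 1.0 and the mix is left alone, which matches the behaviour described above.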

The best part is that all of these elements exist in both single player and multiplayer, giving the player valuable feedback in a natural way that does not need to be taught.

DS: In addition to the traditional soldier class in multiplayer, players can also play as different creatures such as the Zek and Phase Tick, each one with different skills. How did you make every character feel different in regards to the sound design?

KS: The creatures in the game were very unique and different from each other. Their body shapes, size, movements and attack powers were different enough in design to give us an advantage in clarifying their unique sound designs. I generally like to just let a creature sort of “speak to me” about how it will sound. Playing a creature before it has sound, watching it move, run, jump, attack, die, etc. helps to get a sense of the voice and movement sounds that need to fill in the blanks. The Tick was easy to set apart because he was the only real “bug”. He was small and fast and sneaky, which influenced his sound design. In contrast, the Zeks were somewhat humanoid and shared the sound qualities of humans with the addition of more brutish attacks and the phasing abilities. I think the most important guide is to give each creature a sound design that suits him well and is fun to hear when you play.

JS: As Kevin said, the creatures each have a unique vocal sound about them. Later in the project we wanted to expand upon simple vocal sounds with how the players control these characters, so I worked on matching the various movement sounds to the animation. There are a few layers to these sounds, such as the footsteps, foley movement and vocalizations.

For the footsteps, we had some unique “foot types” with the characters. The Zek and Revert have bare feet, the Phase Tick has small, pointy spider-like legs and the Radion has large creature feet. Around the time I was working on the playable creatures I was also starting to learn programming, so I whipped up some trickery to reuse the regular player/soldier footstep sounds for the creatures. Since the soldiers had material-specific combat boot footstep sounds we couldn’t simply use their sounds for the creatures. What we ended up doing is developing a method to fade layered sounds at the start of playback. With this functionality in place, we could then play a general bare-foot, Tick-foot, or Radion-foot sound that was not material specific and fade in the material-specific combat boot footstep sounds to eliminate the boot-like quality — leaving only the material resonating aspects intact. This worked out great, especially in coordination with the way we dynamically adjust the pitch and volume of the footsteps based off of the character speed.
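The layering trick can be sketched like this: fade in the start of the material-specific boot sound so its attack disappears, then mix it under a generic creature-foot sound. Sample lists, the linear fade and the function names are all assumptions for illustration; the interview does not describe the actual fade shape or code.

```python
def fade_in(samples, fade_len):
    """Linearly ramp the first fade_len samples from silence to full level."""
    out = []
    for i, s in enumerate(samples):
        gain = min(1.0, i / fade_len) if fade_len > 0 else 1.0
        out.append(s * gain)
    return out


def layer_footstep(creature_foot, boot_on_material, fade_len):
    """Mix a generic creature foot hit with the faded-in boot/material layer.

    The fade removes the boot's attack so mostly the material's resonance
    remains under the creature's own foot sound.
    """
    faded = fade_in(boot_on_material, fade_len)
    length = max(len(creature_foot), len(faded))
    mixed = []
    for i in range(length):
        a = creature_foot[i] if i < len(creature_foot) else 0.0
        b = faded[i] if i < len(faded) else 0.0
        mixed.append(a + b)
    return mixed


print(layer_footstep([1.0, 0.0], [1.0, 1.0, 1.0], fade_len=2))  # [1.0, 0.5, 1.0]
```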

The foley and vocalization sounds fill in the gaps quite a bit, as the Revert slurps and moans all the time and the Zek is usually huffing and puffing away while moving. The Phase Tick footstep sounds without any foley or vocalization accentuate the quiet, spider-like quality. The large footsteps of the Radion make it sound like there’s always movement going on through the body and exoskeleton.

AB: When designing a sound for a game I always consider two components of audio feedback: 1. What does the player need to hear so they can functionally play the game? 2. What does the player want to hear that makes the game seem more fun? Sometimes you can combine the two concepts, and an example is the Echo Zek creature who appears in the single-player portion of Singularity. This particular enemy is invisible most of the time, but it does reveal itself from time to time. In that instance we wanted to convince the player that the creature is vulnerable, and should be engaged in combat. We used audio to help do this by giving the creature a very specific sound at the moment it reveals itself. We made a layered creature hiss sound from various animal vocalizations, and broke it up with a staccato snipper effect. The end result was what appeared to be a cobra hiss combined with a rattlesnake’s rattle. What we did was take advantage of the fact that most people can recognize both of these sounds, and also understand what they mean. In each situation they indicate the animal feels threatened. Even if you may not be consciously aware of it while playing the game, you might indirectly recognize and understand this creature sound as such, and proceed in combat knowing the enemy is open to attack. It’s a cool sound effect that doubles as an effective gameplay device.

DS: What were your favorite tools and techniques for sound design on this project? Any specific process or tool you find very useful?

DB: That was one of the first questions I asked when I first met the other sound designers here at Raven and I was surprised to discover that we all use completely different audio tools. Maybe for that reason, the creative approach for generating the sounds is not always discussed, particularly at the beginning stage. Occasionally, ideas are bounced around beforehand as to general techniques but when the work starts, studio doors are shut and people get to work privately from their own creative angles. When new work appears in our games, then it enters the collective focus and is open for critique. It surprises me sometimes what another sound designer might do. If someone is working on sounds for a new event, or weapon, or creature, I usually don’t know what their vision is until I hear the ideas surface in game. That’s actually a more compelling way to collaborate than if we all used the same tools and techniques and talked about the work a lot in advance. The creative differences we have add a bit of friction and variety to the results.

Ableton Live was my main weapon of choice for Singularity. Live is a digital audio workstation (DAW) geared towards improvising and controlling music in a live performance setting. I began using it around 2002 as a means to create music by time stretching loops. I was excited by how easy it was to warp sounds and my changes were so fluid, as if I was sculpting audio waveforms out of clay. I got hooked on its capabilities and learned to rely on it for audio editing. I particularly like that it allows sounds to be manipulated in real time, without having to stop sound playback. Also, all parameter changes can be recorded and played back which makes it expressive. Live never got in my way while working on the sounds for Singularity. It formed a straight connection between my ideas and creative output.

This may be unexciting but I would say the signal processing tool I used the most on Singularity is a parametric equalizer. A simple tool like an EQ allows one to sculpt sound in a way that feels and sounds natural. I generally steer away from plug-ins aimed at doing identifiable effects, like flanging, phasing, chorus and that sort of thing. If I’m going to create an effect I want the signal process to become one with the sound, not be something that coats the top or sounds prepackaged. For Singularity, that was especially important because the world is so organic and unexplainable. I don’t like when I’m hearing a sound go through a particular type of process. That pulls me out of the immersion. I guess it’s like when you see a computer generated character on the screen and it’s so fake that you’re having trouble imagining that it’s real. Although it’s not as easy for the average listener to point out, the same thing can happen with sound.

MK: As Darren mentioned, we each use our preferred tools. I’ve been a Steinberg guy for years, so Nuendo with the Waves Platinum bundle is my weapon of choice. On Singularity, however, I did very little content creation. Most of my work was in implementation and mixing of content, so the tool that had most of my time was our customized Unreal audio toolset. I spent a lot of time toying with attenuation curves, real-time EQ and dynamic mixing. I still dream about editing .ini files.

JS: In terms of tools, I heavily rely on Adobe Audition for all my sound design work. I have been using it since the “Cool Edit” days (originally in Windows 3.1!), back when it was developed by Syntrillium Software. I also use Apple’s Logic Studio for some of the more conceptual and creative aspects of sound design. I designed the multiplayer Beacon voice using Logic’s pitch correction and modulating effects. Beyond that, I really enjoy exploiting the game engine features for sound design use, especially when there is a player response or cause & effect involved in the playback.

In terms of techniques, I relied a lot upon granulating my sound design assets to increase randomized variation, stitching grains together, and randomly firing off one-shot sounds in the environment.

I’m not a fan of looping sounds, especially when there is noticeable repetition. To combat this problem I typically layered constant, tonal ambient sounds and randomly triggered one-shot sounds like gusts, moans and whistles with varying degrees of volume and pitch modulation. This always results in a constantly fresh environment. A rather obsessive example of this are the cricket sounds in various parts of the game. As you move away from the docks at the start of Singularity there are some crickets chirping on your way to the “Welcome Center” building. All of those different cricket sounds are multiple tiny, one-shot cricket chirps that are “physically modeled” to play, delay, and vary in pitch and volume like real crickets do.
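A minimal sketch of the randomized one-shot idea, in Python: instead of looping a recording, schedule individual chirps with irregular gaps and small pitch and volume offsets. The gap range, pitch range and volume range here are made-up numbers, and this is a plain random scheduler rather than the "physically modeled" behaviour Jer mentions, whose details the interview does not give.

```python
import random


def schedule_chirps(duration_s, rng, min_gap_s=0.2, max_gap_s=1.5,
                    pitch_range_semitones=1.0, max_cut_db=6.0):
    """Return one-shot chirp events with randomized timing, pitch and volume.

    All ranges are hypothetical defaults chosen for illustration.
    """
    events = []
    t = rng.uniform(0.0, max_gap_s)  # random start so layered crickets do not line up
    while t < duration_s:
        events.append({
            "time_s": t,
            "pitch_semitones": rng.uniform(-pitch_range_semitones,
                                           pitch_range_semitones),
            "volume_db": rng.uniform(-max_cut_db, 0.0),
        })
        t += rng.uniform(min_gap_s, max_gap_s)  # irregular gap avoids an audible loop
    return events


for chirp in schedule_chirps(5.0, random.Random(12)):
    print(chirp)
```

Running several of these schedulers with different seeds, one per cricket, gives a bed of chirps that never repeats exactly.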

DS: What kinds of tools and systems did you use for audio implementation, and how did you guys approach the mixing of the game?

MK: We used a heavily modified version of Unreal Engine 3 for Singularity, and a lot of tweaks were made to the audio code. For example, we built a dynamic data-driven mixing system that operates in layers, each layer taking priority over another. The game is aware of which sounds matter and which don’t at any given moment, and it makes intelligent decisions based on that. If a character is talking and the player fires a shotgun, the engine will drop the character’s voice and the world ambiance to make way for the gunshot. If there’s zero action, the engine will bring up the environmental ambiance to compensate. The goal is to create an interesting and compelling mix despite unpredictable player input rather than let the sounds play statically and turn into mush if the in-game conditions become too chaotic.
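To make the layered, priority-based idea concrete, here is a toy sketch. The layer names, the fixed 6 dB step per higher-priority layer and the idle ambience boost are all invented for illustration; the real system was data-driven and far more detailed than this.

```python
# Hypothetical mix layers, highest priority first.
PRIORITY = ["weapons", "dialogue", "ambience"]
DUCK_DB_PER_LEVEL = -6.0       # assumed attenuation per active higher-priority layer
IDLE_AMBIENCE_BOOST_DB = 3.0   # assumed lift for ambience when nothing else plays


def mix_offsets_db(active):
    """Return a dB offset per layer, given the set of layers with active events."""
    offsets = {}
    higher_active = 0
    for layer in PRIORITY:
        # Each layer is pulled down once for every active layer above it.
        offsets[layer] = DUCK_DB_PER_LEVEL * higher_active
        if layer in active:
            higher_active += 1
    # With no action at all, bring the environment up to fill the space.
    if not (set(active) - {"ambience"}):
        offsets["ambience"] = IDLE_AMBIENCE_BOOST_DB
    return offsets


print(mix_offsets_db({"weapons", "dialogue"}))
print(mix_offsets_db(set()))
```

With a shotgun firing over a line of dialogue, the dialogue drops by one step and the ambience by two; with nothing happening, the ambience is raised instead.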

We also used our internal VO and localization database system. The writer can submit his script to the system and it automatically generates all in-game assets for us. Then scripters and programmers can hook directly into them. This system used flags in the script to determine the importance of the VO line and who was saying it, and used this to assign it certain mix variables that the dynamic mixing system used when mixing in real-time. It’s not a sexy feature, but it saved us a tremendous amount of time and effort.

We’re big on data-driven systems and automation. On the surface it may seem like we focus too much on tools, but the reality is we like to invest in our tools so they’re transparent and save us time. We’re always looking for ways to automate repetitive, time-consuming tasks. That allows us to focus on the creative component of the process.

JS: To expand upon what Mark said, I’ll detail some of the additional technology and tools we developed for Singularity.

Some of the low-level mixing had to be radically expanded upon and rewritten. We added a natural-sounding, realistic fall-off curve for attenuating our sounds in 3D space. We added Center/LFE channel and Effects send capability to each sound event in the game and wrote our own 3D spatialization code. The custom spatialization defaults to a quadraphonic panning matrix and allows us to “position” two-channel and four-channel sounds in 3D space by panning the input speaker matrix from the asset to the output speaker matrix. This means that a stereo sound playing in front of the camera will map properly to the front left and right speakers. However, if that stereo sound is positioned to the left of the camera in a surround sound configuration the channels “rotate”, so the sound effect “left” channel then plays out of the surround left speaker and the sound effect “right” channel plays out of the front left speaker.

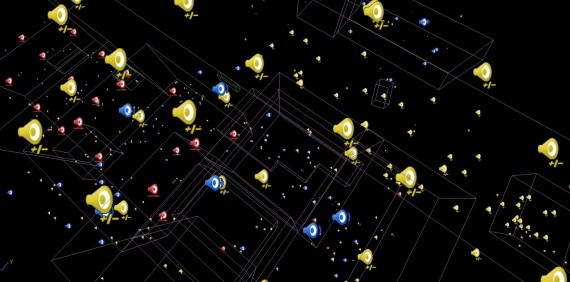
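The channel "rotation" for a stereo asset can be sketched with a simple lookup. This version only handles four discrete directions, whereas the system described above pans through a matrix; the front and left cases follow the interview, while the right and behind cases are extrapolated by symmetry and should be read as assumptions.

```python
# Quad speakers listed clockwise, starting at front left.
SPEAKERS = ["front_left", "front_right", "surround_right", "surround_left"]

# Number of clockwise steps to rotate the stereo pair for each source direction.
ROTATION_STEPS = {"front": 0, "right": 1, "behind": 2, "left": 3}


def route_stereo(direction):
    """Map a stereo asset's channels to quad speakers for a source direction."""
    steps = ROTATION_STEPS[direction]
    return {
        "left": SPEAKERS[(0 + steps) % 4],
        "right": SPEAKERS[(1 + steps) % 4],
    }


print(route_stereo("front"))  # {'left': 'front_left', 'right': 'front_right'}
print(route_stereo("left"))   # {'left': 'surround_left', 'right': 'front_left'}
```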

We also developed in-game volume metering and mix state debugging heads-up displays. We redesigned many of the editor front-end tools to streamline our workflow. A huge benefit of doing this was eliminating the default numbering of audio data, as volume, pitch and filtering were edited with a zero-to-one value. The front-end was reprogrammed to use Decibels, Semi-tones and Frequency values.
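For readers unfamiliar with why decibels and semitones are friendlier than raw multipliers, these are the standard conversions between the two. How the engine's own zero-to-one values mapped onto them is not stated in the interview, so the sketch only shows the general formulas (amplitude decibels and equal-tempered semitones).

```python
import math


def db_to_gain(db):
    """Convert decibels to a linear amplitude multiplier."""
    return 10.0 ** (db / 20.0)


def gain_to_db(gain):
    """Convert a linear amplitude multiplier to decibels."""
    if gain <= 0.0:
        return float("-inf")  # silence has no finite dB value
    return 20.0 * math.log10(gain)


def semitones_to_ratio(semitones):
    """Convert a pitch shift in semitones to a playback-rate ratio."""
    return 2.0 ** (semitones / 12.0)


def ratio_to_semitones(ratio):
    """Convert a playback-rate ratio to a pitch shift in semitones."""
    return 12.0 * math.log2(ratio)


print(db_to_gain(-6.0))          # roughly half amplitude
print(semitones_to_ratio(12.0))  # one octave up doubles the rate
```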

DS: And what about the music direction? What were you looking for and what did you do to achieve it?

KS: The music design for Singularity also began under the influence of the retro sci-fi movie feel I discussed in the first question. The creepy Theremin sound in Forbidden Planet was classic in creating both the music soundtrack and sound effects. We wanted to try and keep that same flavor while bringing it into the 21st century. Jer developed some music examples internally that we used to establish the style. We eventually sought out a composer who demonstrated work that was similar to this genre while still giving it a “modern” power and higher production level. We were fortunate to have Charlie Clouser create music for the game. He was able to understand the style and mood we were trying to emulate and recreate it while putting his own spin on it. The primary character of the mood in the game comes from the creepy, moody atmosphere of the music.
