For this month’s theme of “Research”, Dr. Susan Rogers was kind enough to answer our questions about her work and research in music cognition and psychoacoustics. Susan Rogers holds a doctorate in cognitive psychology from McGill University (2010). Prior to her science career, Susan was a multiplatinum-earning record producer, recording engineer, mixer and audio technician. She is currently an Associate Professor at Berklee College of Music, Boston, teaching music cognition, psychoacoustics, and record production. She is the director of the Berklee Music Perception & Cognition Laboratory where she studies auditory processing in musicians.

Designing Sound: What drew you towards the subject of psychoacoustics and music cognition?

Dr. Susan Rogers: I have an engineer’s mind. I like understanding mechanisms and processes. I also have a scientist’s mind because I am curious about natural phenomena. Auditory science and brain science attract similar kinds of thinkers — those who are ok with imagining the mechanism and process. We typically don’t view air pressure variations, electrons or nerve spikes in action; we must often infer the process from the resulting behavior or event. Short answer is that it’s just fun.

DS: Is it an area that is often overlooked by the scientific community?

SR: My doctoral advisor Daniel Levitin reminds us that humans are visual creatures first and foremost. The sense of sight is how humans navigate the world, including informative activities like reading and watching television. So WAY more research time and money has been spent on mapping the visual modality. The growth of psychoacoustic research in the ‘70s and music cognition in the ‘90s has helped auditory science to catch up.

DS: Whether we’re professionals in music or sound, we all stand on the shoulders of giants; artists, crafters and technicians who’ve defined the mediums, the language of auditory art and the tools that we use today. What are some examples of individuals or landmark studies that paved the way for the research work you’re doing today?

SR: In psychoacoustics, it would include the studies of the inner ear by Nobel laureate Georg von Békésy, consonance/dissonance work by Hermann von Helmholtz, Ernst Terhardt, R. Plomp and W.J.M. Levelt, and cochlear tuning work by Donald Greenwood and Brian C. J. Moore. In music cognition we have tonality work by Carol Krumhansl and Roger Shepard, performance work by Caroline Palmer, timbre perception by Stephen McAdams, the influence of musical training by Gottfried Schlaug, music and language by Ani Patel, and development work by Sandra Trehub and Laurel Trainor. In auditory neuroscience we have the great Charles Liberman and Sharon Kujawa, and the equally great Nina Kraus.

DS: With the resurgence of Virtual Reality, there’s been a lot of work in and development of new tools to create immersive audio that draws from research on how the brain interprets sound. Do you ever expect the work you do to extend beyond academics, that it will perhaps go on to influence a craft or tools in a direct way?

SR: Some researchers get frustrated with the grant writing process and having to spend so much time teaching that they succumb to the “pull of the weasel” and join commercial research endeavors. I know one who would like to help build a better music search engine and who looks for opportunities to be paid to do so. I am like most researchers in that I enjoy the pure motive of intellectual curiosity. I want to contribute to what is known of the natural world, and I don’t want pressure to find a pre-determined outcome. That sounds a bit cynical. I don’t mean to imply that commercial work is biased; only that it risks bias.

DS: Musicians and sound professionals can typically expect to spend anywhere from a few weeks to a year working on an album, film, game, or installation. What sort of timeframe are you dealing with when you approach a specific topic or hypothesis to explore?

SR: Being a research scientist is like being an explorer. You don’t know how long you will be gone because you don’t know what you’ll find. If you find something unexpected and cool, you toss your map and follow the new trail. Another factor is other researchers’ work. If you’re exploring an unknown and someone publishes a report that answers your question, you must revise or skip it. It’s like making a record; if someone releases an absolute smash hit while you’re in the midst of yours, it can influence your direction. Another obstacle is money and its corollary — your tools. I measure auditory evoked potentials because I can afford the tool and its software. Before I could afford it, I used a laptop to conduct perception work. Like making a record, scientists can only go from the vision to the materials if they can afford the materials. The majority of us work from the materials to the vision.

DS: How has your position at Berklee College of Music, a school with approximately 4000 musicians, influenced, aided, or redirected your work and focus?

SR: It makes sense for me to use what’s at hand in my explorations, so I examine differential auditory processing as a function of musical training and background. Researchers at major universities and colleges compare musicians to non-musicians. Berklee affords me the opportunity to make intra-musician comparisons. Berklee is the (musical) world! We have a nearly 50% international student body and our musicians are trained in all styles, not just classical and jazz.

DS: What has your previous experience and testing shown you about the way musicians operate cognitively compared to those who’ve never received formal music training?

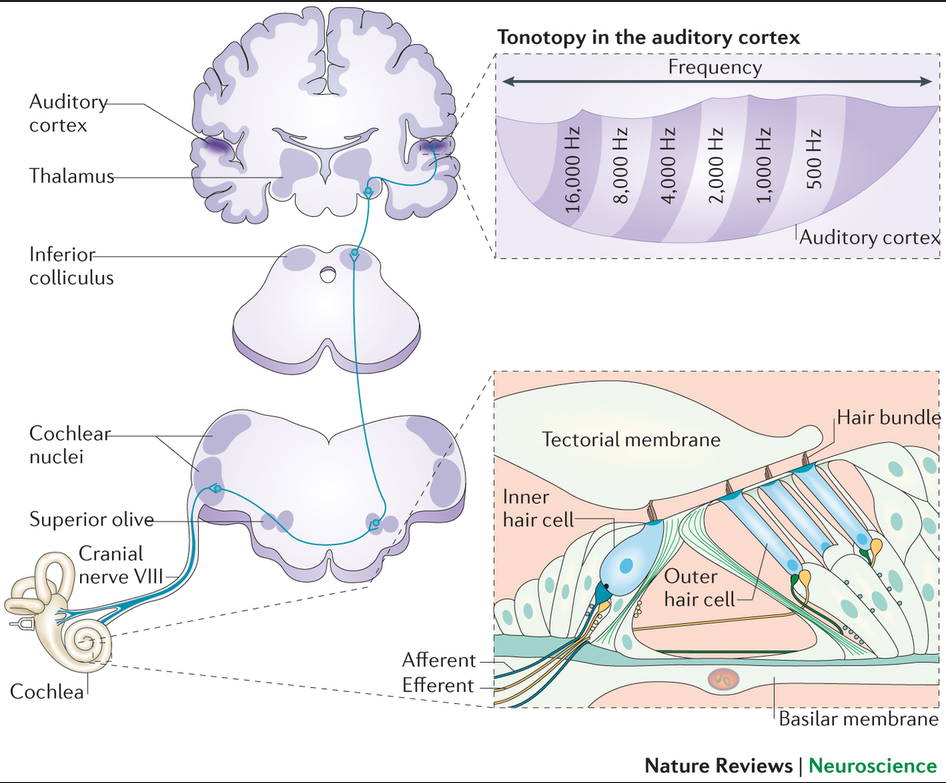

SR: It has been established by Nina Kraus and others that three or more years of musical training in childhood allows musicians to process sound faster and with greater acuity than non-musicians. Young musicians become “auditory athletes” by enhancing nuclei and neurons in the auditory path from inner ear to cortex. This offers cognitive and social advantages such as impulse control, pattern recognition, capacity for delayed gratification, ability to synchronize movements to another’s, enhanced empathy, better verbal memory, and improved reading scores. In old age, musicians are better equipped to interpret accented speech, and they show improved speech-in-noise perception and spatial localization.

DS: What is your current research exploring and do you anticipate any specific findings?

SR: I am looking at the auditory brainstem response of our Berklee musicians with a twofold aim: to see if specific music listening habits develop noticeable differences in the neural “wiring,” and to look for signs of early onset auditory neuropathy. We know that acoustic trauma from repeated high-intensity noise exposure can lead to auditory nerve cell degradation and death. When this happens, we go from having a 24-bit sampler (so to speak) to an 8-bit sampler. To use another analogy, it’s like replacing your 4 AWG speaker cable with 18 AWG cable. You will lose power and signal quality in the high frequencies. I anticipate that our drummers, horn players, and heavy metal guitarists might be at greatest risk for damage, and that our audio engineers and sound designers may show enhanced signal processing acuity from having to recognize and manipulate small sonic distinctions. I’m in the early stages of the work, so I don’t know yet if I’ll have a finding.

DS: Do you feel or have evidence to support that musicians are “wired differently”, that they behave or process information differently even in a non-musical context?

SR: It would be irresponsible of a scientist to say that she “feels” that something is true, so I must cite the peer-reviewed literature. Musicians (defined as those with five or more years of formal musical training starting before age 14) process sound — music, speech, environmental noise — faster and with greater acuity than non-musicians. Musicians process visual information the same as non-musicians. It is only the auditory processing that differs. In a longitudinal study, Gottfried Schlaug explored the “chicken or the egg” question by taking cognitively matched children and randomly assigning half of them to take music lessons. Areas of the brain associated with pitch and rhythm processing were enhanced in the music group two years after onset of training. Brain volume in the cerebellum (which coordinates motor activity) of male musicians is greater than in male non-musicians. Female musicians have greater overall brain volume than female non-musicians.

DS: Dr. Poppy Crum of Dolby recently shared an interesting perspective on sound designers…

“I have huge respect for sound designers… They may not speak in the same vocabulary I speak in, but they have an expert understanding of human perception. The ability to take sounds from many different sources but recognize the experiential representation that someone listening to a film is going to have is at the core of human experience. And they understand that innately.” (Dr. Poppy Crum, http://www.mixonline.com/news/facilities/dolby-s-new-san-francisco-hq/427147)

This isn’t so much of a question, but I’d be interested to hear you reflect on her sentiment as it relates to music, musicians, sound and sound designers perhaps compared to how we process visual art.

SR: My dear friends Wendy Melvoin and Lisa Coleman just spent a week at Berklee. They spoke of their work as television and film composers. They use intuitive knowledge to cue viewers in on the subtext of visual scenes. I can’t remember the name of the Italian sculptor who first said it, but he said that every work of art is “on its way to becoming something else.” The sonic canvas of films, television, and games gives us the sense of what the art means and what it is becoming moment-to-moment. Scoring probably evolved when ancient story tellers needed an accompanist to enhance a story. If he was describing danger, maybe it called for a low rumble like a stampede or thunder would make. It could also be the quiet sound of a breathing animal or a slithering snake (think of how often we use maracas — which sound like rattlesnakes — in scores). If he was describing happiness, maybe it called for a high-pitched fast melody like birds or laughing children make. Loneliness might be the sound of wind on a barren plain. It makes sense that humans have evolved to associate certain timbres, frequency ranges, rhythms, intensities, and intervals with environmental sounds and that this formed the foundation of our music systems.

As for how we process visual art, I’m sorry to say I don’t know much about it. I do know that people in collectivist societies like Japan and China tend to view the whole object first, then the details, while that is reversed for those in individualistic societies, like most of the West.

A big thank you to Dr. Susan Rogers for taking the time to answer our questions. You can learn more about music cognition through the Society for Music Perception and Cognition (SMPC).

Dr. Rogers herself teaches an online course on Music Cognition which you can find at the following link: OLSOC 307 Music Cognition through Berklee Online

re: the comment ~ ” Musicians (defined as those with five or more years of formal musical training starting before age 14) process sound — music, speech, environmental noise — faster and with greater acuity than non-musicians. Musicians process visual information the same as non-musicians. It is only the auditory processing that differs. ” — the question i have is how a musician defined? i’ve long felt everyone is a musician but not everyone accepts that identity because of cultural / social / etc. pressures.

my comment should have been edited to state: why is a musician defined as someone with five or more years of formal musical training starting before age 14? it’s a false paradigm and negates many professional / cultural musicians and music makers.

Glenn, I should have made a stronger qualifying statement on the definition of the word “musician” in psychology. Practically speaking, a musician is someone who plays or sings. Scientifically speaking, a “musician’s brain” is one that shows processing circuitry — actual physical structures — that are distinct from a non-musician’s. There are behavioral differences (i.e., auditory perception and cognition) between persons who have had lots of musical training and practice, and those who haven’t. These changes are along a continuum, of course, so we draw the line between “musician” and “non-musician” at the point that includes the vast majority in each sample. That is currently around 5 years of musical training. Folks with 2 or 3 years of musical training show physical and psychological profiles somewhere in between. A scientific definition of “musician” is useful in science, but it doesn’t apply in art.

i understand this distinction for practical scientific purposes but i find myself wondering if this sort of limited study group perpetuates false paradigms of what constitutes formal musical training and serves to support cultural musical colonialism and biases. formal musical training can involve listening to nature and transforming that understanding of the sonic world into sound. this can be an act passed down via listening traditions or other practices. all children are musical in their early years, but at a point in their development some continue to consider themselves musical while others accept they are non-musical. my informal observation is these choices are enforced by the formalized and institutionalized music and art practices. i’d be curious about child physical and psychological profiles up till the point where the musician identity changes– perhaps age 0-5. active listening is musical composition. if you accept that all sound is music but it is the biases of culture, identity and so on that declare sound/performance un-musical then the idea of formal musical training vis academic / scientific definitions are anemic. so i would suggest that perhaps it is not formal musical training that affects processing circuitry but rather the listening and expression practice in general and can be found in other populations and practices regardless of the formal musical training component.

You (glenn) seem to be suggesting that musical training could be *listening* differently, not just becoming more educated through formal schooling to make sound, like how we think of music so far.

Thank you, Dr. Rogers for this great interview/article. I’m delighted to hear about the benefits of music lessons for those under 14 (and I’ll be sharing that with the parents of younger children I know). Has there been any similar research on the benefits to adults who take up playing later in life?

I am beginning to look at this within an MFA program (nearing the degree soon). I do research into conceptual compositions & work with a/v; I’ve spent my life as a professional jazz trombonist and now also play/record drone music & noise using a hurdy gurdy (my own is a large, Hungarian one so the tones are low in pitch with a nice resonance at about pedal A give or take). I plan to do a short film next that depicts a few psychoacoustic effects. This is start-up work on this line of thinking and I’d like to cite this interview! My short film (due in three weeks) would simply introduce these ideas to other MFA artists who are not musicians. My background: 45 years playing jazz but I did clinical lab work, too, for three years. I wanted to write in and say hello.