Guest article by Andreas Jonsson

DSP beginnings

Last year, I undertook a one-year Masters in Sound for Moving Image at the Glasgow School of Art, which got me properly introduced to the wonderful world of DSP and the seemingly endless possibilities of Max/MSP.

Having dabbled briefly in Pure Data and coming from a background in computer programming, I was completely hooked before long. The first thing I created was a variation on a sampler keyboard, a sort of homage to my trusty Casio SK-1 and Yamaha VSS-30 keyboards. Building it really opened my eyes to how endless the possibilities could be with DSP and how the only real limitation seems to be one’s imagination.

So I started to imagine other possible ideas that I could use for my final project and my mind eventually settled on an idea for an “Ambience Designer”. I’m a big fan of working with ambiences in sound design and the interview with Tim Nielsen a couple years back on Designing Sound nicely articulates the importance of well-crafted backgrounds.

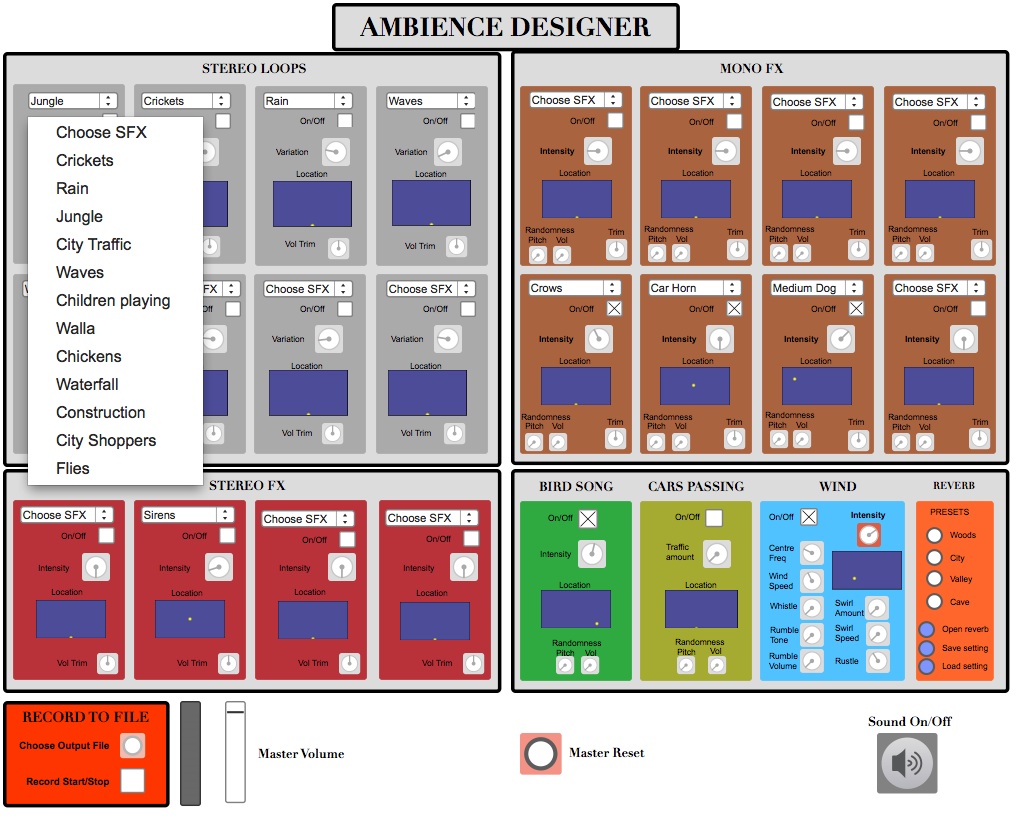

My thinking was – could I create something where you could design ambiences using a modular approach, where common sonic components of ambience creation were made available and could be tweaked using an intuitive UI for stereo placement, distance and intensity?

While I knew that this type of system would never have the flexibility or variation needed to accommodate the subtle nuances of most professional sound design, it seemed a useful tool for hobbyists and had interesting educational potential. And apart from anything else, I liked the challenge of creating it and was curious how it might turn out.

From idea to execution

I began by noting down a list of common sounds that would be included in such an application, such as wind, rain, waves, thunder, traffic, bird song, dog barks, crickets, crows etc. From this list, I began making notes on the different methods of implementation these sounds would need, depending on the nature of each sound.

I identified three main types of implementation:

Looping stereo layers

These would be sounds that by their very nature would be difficult to break down into component parts due to having a lot of random unpredictable behaviour.

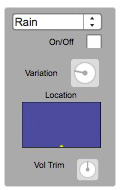

These “STEREO LOOPS” modules consist of continuously looping layers, with multiple stereo sound files of varying intensity (in the case of rain, from light drizzle to heavy downpour) playing continuously. The Variation knob would then cross-fade between these sound files, creating the illusion of a gradually increasing amount of rainfall as the dial is turned.
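As a rough illustration of the cross-fading idea (a Python sketch, not the actual Max patch), the knob position can be turned into one gain per layer. The function name `layer_gains` and the equal-power curve are assumptions made for this example; the original patch may well use a different fade shape.

```python
import math

def layer_gains(variation, num_layers):
    """Return one gain per looping layer for a knob position in [0, 1].

    Hypothetical helper: adjacent layers are blended with an equal-power
    (cosine/sine) curve, so at most two layers are audible at once.
    """
    if num_layers < 1:
        raise ValueError("need at least one layer")
    if num_layers == 1:
        return [1.0]
    variation = min(max(variation, 0.0), 1.0)
    position = variation * (num_layers - 1)   # e.g. 0.0 .. 3.0 for four layers
    lower = min(int(position), num_layers - 2)
    blend = position - lower                  # 0.0 = only lower, 1.0 = only upper
    gains = [0.0] * num_layers
    gains[lower] = math.cos(blend * math.pi / 2)
    gains[lower + 1] = math.sin(blend * math.pi / 2)
    return gains
```

With four rain layers, a knob at 0.0 plays only the lightest drizzle, a knob at 1.0 plays only the heaviest downpour, and positions in between blend the two nearest layers.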

Granular samples

These are sounds that could be broken down into smaller granular pieces and played back in such a way that a considerable variety could be achieved with the right amount of randomisation and variance. As an example, the sounds of a dog barking could be broken up into individual samples and made into a small library. Much like the common approach for sound design for games, these samples could then be played back at a random interval, with slight variations of speed/pitch and volume to create the variety and avoid repetition.

The MONO and STEREO FX modules use this granular approach. Multiple variations of a sound effect are made into separate files, and the patch would play back a sound file at a controlled but random interval. The Intensity dials would then gradually increase/decrease the random time between samples, giving the illusion of, say, more crows or busier traffic. There are also controls for altering the amount of pitch and volume randomization.
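The triggering logic described above can be sketched in a few lines of Python. This is only an illustration under assumptions: the function name `schedule_events`, the 0.5 to 10 second gap range, the default spread values and the rule of never repeating the same file twice in a row are all made up for the example and are not taken from the patch.

```python
import random

def schedule_events(num_files, intensity, duration_s,
                    pitch_spread=0.1, volume_spread=0.2, seed=None):
    """Return a list of randomised playback events for one FX module.

    intensity in [0, 1]: higher values shorten the random gap between
    samples (hypothetical mapping: longest gap goes from 10 s to 0.5 s).
    """
    rng = random.Random(seed)
    intensity = min(max(intensity, 0.0), 1.0)
    max_gap = 10.0 - 9.5 * intensity
    min_gap = max_gap * 0.25
    events = []
    last_file = None
    t = rng.uniform(min_gap, max_gap)
    while t < duration_s:
        # Assumption: avoid playing the same file twice in a row.
        choices = [i for i in range(num_files) if i != last_file] or [0]
        file_index = rng.choice(choices)
        events.append({
            "time": t,
            "file": file_index,
            "rate": 1.0 + rng.uniform(-pitch_spread, pitch_spread),  # speed/pitch
            "gain": 1.0 - rng.uniform(0.0, volume_spread),           # volume
        })
        last_file = file_index
        t += rng.uniform(min_gap, max_gap)
    return events
```

Calling `schedule_events(6, 0.9, 60.0)` would give a dense minute of events from a six-file library, while an intensity of 0.1 gives only a handful.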

The BIRDS module uses the same granular approach to play a set of recordings made by friend and sound artist Hanna Tuulikki, who has taught herself to mimic a number of common bird calls native to these parts – such as the song thrush, wren, chaffinch and various tits.

Procedural synthesis

Procedural audio is an increasingly popular method of creating a virtually infinite variation of sounds through real-time synthesis based on physical modelling of the sound source. It is particularly useful within video game audio because it can be linked to player/game-dependent states within a game engine, allowing for a more dynamic interaction than traditional sample-based methods.

Complex sounds can be difficult to model realistically, but sounds such as wind, rain and water can be synthesised to a high standard with a high level of flexibility and user control. Examples of this can be seen and heard in VST plugins such as AudioGaming’s Wind and Rain modules, and Audiokinetic’s Wwise SoundSeed add-ons.

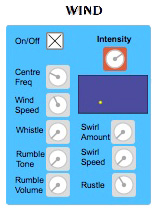

The WIND module is a modified “translation” of a Pure Data patch from the book Designing Sound by Andy Farnell, with further alterations to give user control of parameters such as centre frequency, rumble and whistle amount. It uses a number of white and pink noise generators, processed by a bunch of low-pass and band-pass filters. The cut-off/centre frequencies of these filters are modulated by a cyclical signal, which itself is modulated by a somewhat chaotic signal derived from yet more white noise generators, producing the sound of gusts and squalls.
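To give a feel for the principle, here is a heavily simplified Python sketch: a single band-pass filter on white noise, with its centre frequency pushed around by a slow sine wave plus slowly drifting smoothed noise. It is not a port of Farnell's patch or of my module. The function name `wind_samples`, every default value and the way the two control signals are simply summed are assumptions made for the example; the band-pass coefficients follow the widely used "Audio EQ Cookbook" biquad formulas.

```python
import math
import random

def wind_samples(num_samples, sample_rate=44100, base_hz=400.0,
                 depth_hz=300.0, q=5.0, seed=None):
    """Return band-passed white noise with a wandering centre frequency."""
    rng = random.Random(seed)
    # One-pole low-pass at roughly 0.5 Hz turns noise into a slow "gust" signal.
    smooth = math.exp(-2.0 * math.pi * 0.5 / sample_rate)
    gust = 0.0
    x1 = x2 = y1 = y2 = 0.0
    out = []
    for n in range(num_samples):
        gust = smooth * gust + (1.0 - smooth) * rng.uniform(-1.0, 1.0)
        chaotic = max(-1.0, min(1.0, gust * 100.0))   # rescale, then clip
        cyclical = math.sin(2.0 * math.pi * 0.1 * n / sample_rate)
        control = 0.5 * cyclical + 0.5 * chaotic      # stays within [-1, 1]
        centre_hz = base_hz + depth_hz * control
        # Band-pass biquad (constant 0 dB peak gain); its b1 term is zero.
        w0 = 2.0 * math.pi * centre_hz / sample_rate
        alpha = math.sin(w0) / (2.0 * q)
        a0 = 1.0 + alpha
        b0, b2 = alpha / a0, -alpha / a0
        a1, a2 = -2.0 * math.cos(w0) / a0, (1.0 - alpha) / a0
        x0 = rng.uniform(-1.0, 1.0)                   # the white noise source
        y0 = b0 * x0 + b2 * x2 - a1 * y1 - a2 * y2
        x2, x1 = x1, x0
        y2, y1 = y1, y0
        out.append(y0)
    return out
```

A fuller version would layer several of these bands, including a low rumble and a narrow high "whistle", which is where the user-facing parameters come in.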

Here is how it ended up looking:

Distance and panning

An important part of ambience editing and mixing is finding the correct sound perspective. This involves placing the recording at the appropriate spatial distance and left/right position that is suitable for the situation.

The properties of a sound change in quite complex ways over distance. The overall volume attenuates by roughly 6dB for every doubling of its distance. There is also a very complex attenuation of high frequencies that is dependent on the temperature and humidity of the environment. In addition, there is an increase in sound reflections and reverberation as an object moves away from you. Other factors such as wind speed and direction also exist, but I chose to overlook these for the purposes of my implementation.
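The 6dB figure follows from the inverse distance law for a point source in a free field, where the level change relative to a reference distance is -20·log10(d/d_ref). A small Python check, with a hypothetical function name and a 1-unit reference distance chosen only for the example:

```python
import math

def distance_gain_db(distance, reference=1.0):
    """Level change in dB at `distance`, relative to the level at `reference`.

    Free-field point-source approximation only; it ignores the frequency
    dependent air absorption and the reverberation mentioned above.
    """
    if distance <= 0 or reference <= 0:
        raise ValueError("distances must be positive")
    return -20.0 * math.log10(distance / reference)
```

Doubling the distance from 1 to 2 gives about -6.02 dB, and doubling again to 4 gives about -12.04 dB.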

My goal was to create an intuitive way of placing sounds spatially, without the need to control separate processes in order to achieve the right perspective. I ended up using the [jsui] object as an X/Y controller in Max, where X pans the sound from left to right and Y moves the sound away from you.

Moving along the Y-axis simultaneously attenuates the sound’s volume and HF content and increases its reverb amount. In addition, a stereo sound becomes narrower as it moves away from you, adding to the illusion of distance.

Panning also required a considered approach. As my sounds would often be stereo samples (or mono samples spread over a randomised stereo field), I wanted a way to gradually fold the sound towards being mono as the user pans further to the left or right. This was done to always allow for a certain width of the sound, even at extreme left or right positions, meaning the sounds never quite become point source.
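One way to picture the X/Y mapping is as a single function that turns a controller position into all the processing parameters at once. The Python sketch below is an assumption-laden illustration rather than the patch itself: the 1 to 32 unit distance range, the filter and reverb ranges, the `MIN_WIDTH` floor and all names are invented for the example.

```python
import math

MIN_WIDTH = 0.1  # hypothetical floor so a sound never becomes a point source

def clamp(value, low, high):
    return max(low, min(high, value))

def pan_gains(position):
    """Equal-power (left, right) gains for a position in [-1, 1]."""
    angle = (position + 1.0) * math.pi / 4.0
    return math.cos(angle), math.sin(angle)

def xy_to_params(x, y):
    """Map x (0 = left, 1 = right) and y (0 = near, 1 = far) to parameters."""
    x = clamp(x, 0.0, 1.0)
    y = clamp(y, 0.0, 1.0)
    distance = 1.0 + 31.0 * y        # assumed range: 1 to 32 distance units
    centre = 2.0 * x - 1.0           # -1 = hard left, +1 = hard right
    # Width shrinks with distance and with pan extremity, but never to zero.
    width = max(MIN_WIDTH, (1.0 - 0.7 * y) * (1.0 - abs(centre)))
    return {
        "gain_db": -20.0 * math.log10(distance),
        "lowpass_hz": 20000.0 - 18000.0 * y,   # duller with distance
        "reverb_mix": 0.1 + 0.6 * y,           # wetter with distance
        # Each input channel gets its own pan position around the centre.
        "left_gains": pan_gains(clamp(centre - width, -1.0, 1.0)),
        "right_gains": pan_gains(clamp(centre + width, -1.0, 1.0)),
    }
```

At the centre of the near edge the two input channels sit hard left and hard right; dragged fully to the right they end up close together but still at slightly different positions, which is the "never quite point source" behaviour described above.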

Creating the Ambience Table

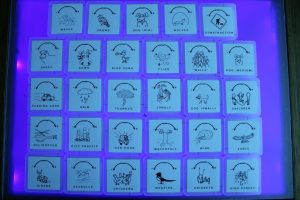

Having previously seen videos of the Reactable, I was keen to create a tactile version of my Ambience Designer using similar technology. Fortunately, the developers behind the Reactable made parts of their technology open source and free for anyone to use, including a multi-touch toolkit called ReacTIVision.

This toolkit has software that can recognise special symbols called “fiducials”. Using a camera connected to a computer, these fiducials can be identified by their individual ID numbers in ReacTIVision and their positional data tracked in X/Y space, as can their rotation. This data can in turn be sent to and received by a number of clients, one of which is Max/MSP.

I have designed my patch to receive fiducial tracking data and process the corresponding sound’s distance/panning/intensity accordingly. Each fiducial ID corresponds in my patch to a specific sound, so when it “sees” the ID1 marker it will trigger, play back and control parameters of “Crickets”, whereas ID2 always corresponds to “Rain” etc.
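In code terms, the ID-to-sound mapping is just a lookup table. The Python sketch below assumes the tracker reports a normalised X and Y in the range 0 to 1 and a rotation in radians, and it assumes that rotation drives intensity; that last mapping, the function name and the dictionary layout are illustrative guesses, not a description of the patch.

```python
import math

# IDs 1 and 2 are the examples given above; further IDs would be added here.
SOUND_BY_FIDUCIAL = {1: "Crickets", 2: "Rain"}

def handle_fiducial(fiducial_id, x, y, angle):
    """Translate one tracking update into parameters for the matching sound.

    Returns None for markers that have no sound assigned.
    """
    sound = SOUND_BY_FIDUCIAL.get(fiducial_id)
    if sound is None:
        return None
    full_turn = 2.0 * math.pi
    return {
        "sound": sound,
        "pan": min(max(x, 0.0), 1.0),        # left .. right
        "distance": min(max(y, 0.0), 1.0),   # near .. far
        "intensity": (angle % full_turn) / full_turn,  # assumed: rotation = intensity
    }
```

An update for marker 2 in the middle of the table, turned half-way round, would then yield the "Rain" sound at centre pan with an intensity of 0.5.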

With the aid of a friend skilled in carpentry, we designed and put together a wooden table with a translucent plexiglass tabletop. A camera is then mounted inside to track fiducials placed on the screen, which sends data to Max/MSP. It provides a hands-on way of interacting with sound in an intuitive, musical and playful manner.

Max objects

Whilst it is outwith the scope of this article to go into too much detail on my actual Max patcher, I would like to mention two objects that I used throughout and found invaluable to my implementation: [poly~] and [coll].

[poly~] is an object that deals with instancing and polyphony. As I was dealing with multiple instances of the same modules (8 Stereo Loop modules, 8 Mono FX modules etc), I needed to find a way of creating a module that could be duplicated into several instances. This would give me the flexibility to expand my patch into any number of modules and also meant I didn’t have to duplicate any changes I later made to the patch in several places.

The way [poly~] works is that it encapsulates an entire patcher within the [poly~] object and each time it is called upon it creates a whole new instance of that patcher. [poly~] was also used within the Mono FX modules to allow for polyphonic playback of the sounds that are triggered at a randomised interval.
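For readers who think in code rather than patch cords, polyphonic playback can be pictured as a pool of identical voices. The Python class below is only a loose analogy under assumptions: it uses a fixed number of voices and steals the voice that will finish soonest when all are busy. It says nothing about how [poly~] manages its instances internally, and the class and method names are hypothetical.

```python
class VoicePool:
    """A fixed pool of identical playback voices (illustrative analogy only)."""

    def __init__(self, num_voices):
        if num_voices < 1:
            raise ValueError("need at least one voice")
        self.busy_until = [0.0] * num_voices

    def trigger(self, start_time, length):
        """Assign a sample of `length` seconds to a voice; return its index."""
        for index, end_time in enumerate(self.busy_until):
            if end_time <= start_time:       # this voice has finished playing
                self.busy_until[index] = start_time + length
                return index
        # Every voice is busy: steal the one that would have finished soonest.
        index = min(range(len(self.busy_until)),
                    key=self.busy_until.__getitem__)
        self.busy_until[index] = start_time + length
        return index
```

With two voices, overlapping dog barks triggered at 0 and 1 second land on voices 0 and 1, and a third bark arriving while both are still sounding takes over voice 0.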

The [coll] object is by no means one of the more exciting objects, but it is a workhorse that can enable some really flexible patchers and save a lot of time and effort when dealing with any information that needs to be stored.

It is an object that can store, edit and recall messages and data in regular text files on your computer, essentially working as a database. This meant I could quite easily manage data relating to my library of sounds, such as file names, file location, number of files for a certain type, display name etc.
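To show why a plain text file works well as a small database, here is a Python sketch that reads text laid out the way [coll] saves its contents, with one `index, data;` entry per line. The parser is deliberately simplified (it ignores quoted symbols containing spaces), and the example fields of display name, file prefix and number of files are a hypothetical layout, not the one used in my patch.

```python
def _atom(token):
    """Convert a token to int or float where possible, else keep the string."""
    for cast in (int, float):
        try:
            return cast(token)
        except ValueError:
            pass
    return token

def parse_coll(text):
    """Parse 'index, data ...;' entries into a dict of index -> list of atoms."""
    entries = {}
    for raw in text.split(";"):
        line = raw.strip()
        if not line:
            continue
        key, _, data = line.partition(",")
        entries[_atom(key.strip())] = [_atom(tok) for tok in data.split()]
    return entries

# Hypothetical library file: index, display name, file prefix, number of files.
EXAMPLE = """
1, Crickets crickets 6;
2, Rain rain 4;
"""
library = parse_coll(EXAMPLE)
```

Here `library[2]` would give `["Rain", "rain", 4]`, which is enough to build file names such as `rain_1.wav` to `rain_4.wav` under whatever naming scheme the sound library uses.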

In Conclusion

There are infinite varieties of soundscapes that depend on the biology, geology, weather, season and human activities in an area. In order to create a suitable ambience for the moving image, consideration would also need to be taken in terms of narrative structure, emotional context, audience immersion and sonic envelopment.

Creating a tool that would fulfil these criteria of diversity and dramatic complexity with a simple and intuitive design is always going to be difficult, and for a professional sound designer often limiting. But in doing so, it also opens up a way of interacting with sound on a different level, an instrument of sorts that allows the user to experiment and play with sounds on a modular level. This could allow hobbyists to achieve good-sounding ambiences with minimal effort or technical knowledge and can be an educational tool for children to understand the make-up of our sonic environment.

I can imagine ways one could expand upon my concept if more time and expertise could be devoted to it. Perhaps it could be made into a Virtual Instrument, with automation and integration into modern DAWs and a simple way for users to integrate their own sound libraries. Or into a tablet app, where all sounds are placed and tweaked using one big multitouch X/Y area. And maybe it can be expanded to have binaural or multi-channel implementation, where sounds can be placed around you in any direction.

Ultimately though, one of the main things I’ve learned from this project is the liberating power of DSP. This was only my second attempt at making something in Max/MSP, but with a little work and aid from the various online resources (of which there are plenty!) some unique and interesting results can be achieved. And the road to creation is both insightful and rewarding.

Special thanks to Andreas for putting this article together. You can find him on Twitter @dreasjonsson and at his site: http://sites.google.com/site/ajonssonportfolio/

We’re always open to guest contributions on the site. If you have something you’d like to share with the rest of the community, contact shaun [at] designingsound [dot] org.

Very cool article. Would love a follow up with a more in-depth look at some of the modules.

That’s a very nice project. I think you made a lot of great design decisions.

Thanks for the kind words. I am planning to make the patcher available for download via the Cycling74 website at some point soon; it just needs a few tweaks. So if you’re interested in learning more about the implementation, that’s probably a good start.

Such a great article, especially liked the ambiance designer you made. Overall the article was easy to understand and follow whilst still maintaining a great level of detail and complexity.

Hey, where can we download/copy the patch to use in MAX?

is it possible to download it ?

Would it be possible to download the ambient designer? thanks a lot

would it be possible to download the Ambience designer patch for pure data?