In the run-up to this month's reverb theme, former contributor Damian Kastbauer suggested we re-run this article he put together discussing the game Crackdown for XBOX. The article may be two years old, but the content remains undeniably relevant. Never one to ignore good suggestions, here we are... One area that has been gaining ground since the early days of EAX on the PC … [Read more...]

Audio Implementation Greats #11: Marrying Toolsets

It may be premature for me to turn the focus of the series towards the future, as we find ourselves deep in the throes of current-generation console development, but I think that by now those of us submerged in creating ever-expanding soundscapes for games suffer, at times, under the burden of our limitations. Of course, it isn't all bad, given a set of constraints and creatively … [Read more...]

Audio Implementation Greats #10: Made for the Metronome

[Written by Damian Kastbauer for Designing Sound] If you talk to anyone in game audio today about successful tempo-synced synergy between music and sound effects, it won't take long for your discussion to end up at REZ and the work of Tetsuya Mizuguchi. The quintessential poster boy for synesthesia in video games and a stunning example of overt spontaneous interactive music … [Read more...]

Audio Implementation Greats #9: Ghost Recon Advanced Warfighter 2 Multiplayer: Dynamic Wind System

When embarking on a sequel to one of the premier tactical shooters of the current generation, the Audio team at Red Storm Entertainment, a division of Ubisoft Entertainment, knew that they needed to continue shaping the player experience by further conveying the impression of a reactive and tangible world in Ghost Recon Advanced Warfighter 2 Multiplayer. With a constant focus … [Read more...]

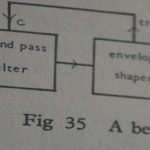

Audio Implementation Greats #8: Procedural Audio Now

This month's Audio Implementation Greats series returns with the overarching goal of broadening the understanding of Procedural Audio and how it can be used to further interactivity in game audio. While not specifically directed at any one game or technology, the potential of procedural technology in games has been gaining traction lately with high-profile uses such as the Spore … [Read more...]

Audio Implementation Greats #7: Physics Audio [Part 2]

In Part One we took a look at some of the fundamentals involved with orchestrating the sounds of destruction. We continue with another physics system design presented at last year's Austin Game Developers Conference and then take a brief look towards where these techniques may be headed. UNLEASH THE KRAKEN In Star Wars: The Force Unleashed we were working with two physics … [Read more...]

Audio Implementation Greats #6: Physics Audio [Part 1]

In part one of a two-part series on physics sounds in games, we'll look at some of the fundamental considerations when designing a system to play back different types of physics sounds. With the help of Kate Nelson from Volition, we'll dig deeper into the way Red Faction Guerrilla handled the needs of their GeoMod 2.0 destruction system and peek behind the curtain of their … [Read more...]