By Karen Collins

Adapted from a forthcoming article in Animation: An Interdisciplinary Journal

An often overlooked aspect of sound design is the use of sound to create a sense of identification for the audience. Just as camera angles establish a point of view, sound can be used to create an auditory position for the listener/audience, putting them “there” in the space, creating an emotional response and empathy, or distancing them from the action.

Auditory perspective is constructed by a variety of techniques that create or reinforce the physical sense of space for the listener through the use of spatialized sound. These techniques combine physical acoustics with psychoacoustics (the perceptual aspects of our response to sound). For example, the perceived location of a sound can appear to emanate from between two loudspeakers, in what is referred to as a “phantom image”. The techniques commonly used to create and reinforce a sense of acoustic space for the listener include microphone placement, loudspeaker placement, and digital signal processing effects.

A number of acoustic properties and effects determine the ways in which sound propagates in space. (For an introduction to sound propagation, see Sound Propagation in Games and Reverb: The Science And The State-of-the-Art.) Of these, reverberation is the most important when it comes to conveying a physical space through sound. When a sound wave meets a new surface boundary of a material, some energy is absorbed and some is reflected. The material’s surface structure affects the absorption as well as the direction or angle of the reflection. The more reflective the walls of a room, the more reverberant the space will be, as the reflections bounce off the walls until all of their energy is absorbed. With each reflection there is a change in timbre and amplitude (as some frequencies are absorbed and others are reflected). Humans can “calibrate” the approximate size of a room subconsciously within just a few seconds of entering the space. In other words, the reverberation patterns of sound can play a significant role in helping to construct the illusion of a three-dimensional space.

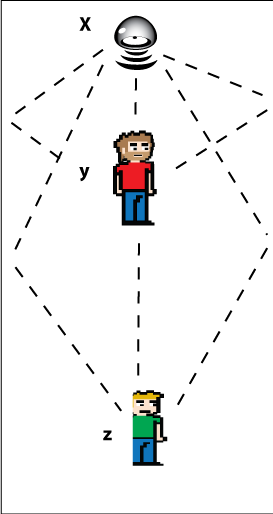

By using reverberation effects on sounds, we can mimic a real, physical space, and put the listener in that space. Depending on where a person stands in a room, the reflection patterns will be different, so using reverberation can “locate” the person in the scene. Direct sounds are sounds that arrive at a listener’s ears directly, without any reflections off surfaces, whereas reflected sounds are the reverberations of that sound off objects in the physical space, which creates a short delay and colours the sound. For example, imagine a listener in a cathedral, with someone speaking at the front, at point x in Figure 1. The listener at point y would be able to hear the speaker quite clearly, primarily receiving the direct signal, although they would still hear some of the reverberation. The listener at point z, however, would hear much more of the reverberation than of the direct signal. Anyone who has stood at the rear of an old cathedral will have experienced how the reverberations obscure the clarity of speech.
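The contrast between the listeners at y and z can be roughed out numerically. The following Python sketch assumes a simple textbook model that the article itself does not state: the direct sound falls by about 6 dB for each doubling of distance (the inverse-square law), while the reverberant field stays at a roughly constant level throughout the room. The function names, the distances, and the `reverb_level_db` value are hypothetical, chosen only for illustration.

```python
import math


def direct_level_db(distance_m, ref_distance_m=1.0):
    """Level of the direct sound relative to its level at ref_distance_m.

    Assumes free-field inverse-square spreading (about -6 dB per doubling).
    """
    if distance_m <= 0 or ref_distance_m <= 0:
        raise ValueError("distances must be positive")
    return -20.0 * math.log10(distance_m / ref_distance_m)


def direct_to_reverb_db(distance_m, reverb_level_db=-12.0):
    """Direct-to-reverberant ratio in dB.

    reverb_level_db is an assumed, constant diffuse-field level expressed
    relative to the direct level at 1 m. Real rooms vary widely.
    """
    return direct_level_db(distance_m) - reverb_level_db


if __name__ == "__main__":
    # Hypothetical distances from the speaker at point x.
    for label, distance_m in [("y (near the front)", 2.0), ("z (rear)", 30.0)]:
        ratio = direct_to_reverb_db(distance_m)
        print(f"listener {label}: direct-to-reverberant ratio {ratio:+.1f} dB")
```

A positive ratio means the direct signal dominates, as for the listener at y; a negative ratio means the reverberation dominates, as for the listener at z.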

Microphone Placement

This sense of distance and space can be created in part through microphone placement: carefully positioning the microphone blends direct and reflected sound. The degree of loudness gives the illusion of proximity between the sound emitter and the listener (perceptually located at the microphone). As with the spatial sound patterns discussed above, microphone distance affects not just the loudness, but also the timbre of the sound source.

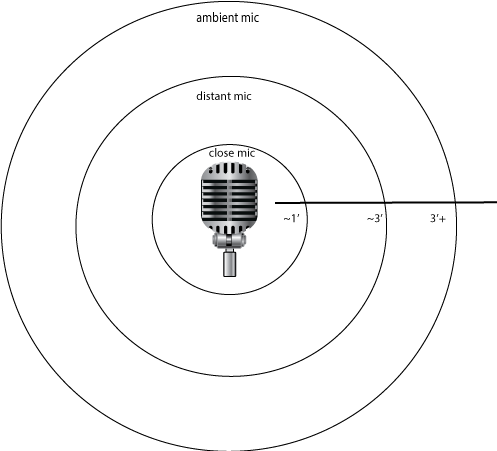

When the microphone is at a distance from the emitter of up to about one foot, it is considered close miking, which emphasises the direct signal over the reflected signals. Distant miking, on the other hand, places microphones at several feet from the sound emitter, usually capturing as much of the sound of the reflections as of the direct sound. Even farther from the source, ambient miking allows the room signal to dominate over the direct signal, for instance capturing the sounds of a crowd at a concert (Figure 2). In this way, microphone placement plays a role in our perception of the location of sounds in relation to ourselves, and of other objects that may obscure that sound in the environment.

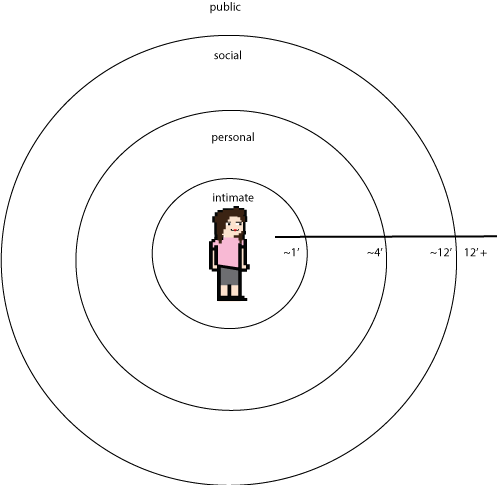

Important to the sense of identification with characters is the use of auditory proxemics (personal space). According to Edward T. Hall, we maintain social distances that can be divided into roughly four zones (Figure 3).

The closest space to our bodies, at a range of less than 18 inches, is our intimate zone, where we touch, embrace, or whisper. Beyond this is our personal space, up to a distance of about 4 feet, generally reserved for family and close friends. The social distance for acquaintances extends as far as 12 feet, and finally we have a public distance beyond the social zone. These distances are culturally specific, but for the most part are accurate for Western audiences.

The distance of a microphone can be analogous to our proxemic zones. In other words, the loudness of a voice can convey a degree of intimacy to us (as the character being spoken to in a film). Close miking, for instance, can give the illusion that we are more intimate with and closer to the speaker than if the microphone is at a distance from the speaker’s mouth when recorded. Feelings of intimacy can thus be created by whispering or close miking, whereas a social or psychological distancing effect can be created by distant miking. Thus, the sound of sighs or soft breathing is often used to facilitate a poignant emotional connection.
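As a rough illustration of this analogy, the sketch below maps a source-to-microphone distance onto Hall’s four zones using the figures quoted above (18 inches, 4 feet, 12 feet). The function name and the handling of the exact boundary values are assumptions made for the example, not part of Hall’s scheme.

```python
def proxemic_zone(distance_ft):
    """Return the proxemic zone suggested by a distance given in feet.

    Thresholds follow the figures quoted in the text. Which zone a
    boundary value falls into is an arbitrary choice made here.
    """
    if distance_ft < 0:
        raise ValueError("distance must be non-negative")
    if distance_ft < 1.5:       # less than 18 inches
        return "intimate"
    if distance_ft <= 4.0:      # up to about 4 feet
        return "personal"
    if distance_ft <= 12.0:     # as far as 12 feet
        return "social"
    return "public"             # beyond the social zone


if __name__ == "__main__":
    for distance_ft in (0.5, 3.0, 8.0, 20.0):
        print(f"{distance_ft:>5.1f} ft -> {proxemic_zone(distance_ft)}")
```

A close-miked whisper at half a foot lands in the intimate zone, while a voice recorded from across a room lands in the social or public zone.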

Microphone placement and reverb, in other words, create a sense of physical distance from objects to the protagonist, but also create a sense of the emotional distance and relationships between the protagonist and the other characters.

Likewise, the spatial positioning of sound effects around us using loudspeaker (or stereo/headphone) positioning (mixing) also helps to represent the sonic environment of the visualised space, and can extend beyond the screen into the off-screen space. Sounds can appear to emanate from a physical place around us through the physical positioning of loudspeakers or through panning and phantom imaging techniques. As with microphone techniques, loudspeaker mixing techniques can create a subjective as well as a physical spatial position. The choice of how to mix sounds in the speakers, in other words, can help the audience to identify with the character.
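One common way to place a phantom image between two loudspeakers is a constant-power pan law. The article does not name a specific panning method, so the sketch below is an assumed example of the general idea: the function name and the -1 to +1 position convention are hypothetical.

```python
import math


def constant_power_pan(position):
    """Return (left_gain, right_gain) for a stereo pan position.

    position: -1.0 is hard left, 0.0 is centre, +1.0 is hard right.
    The gains follow a sine/cosine law, so left**2 + right**2 stays at 1
    and the total acoustic power is roughly constant across the pan.
    """
    if not -1.0 <= position <= 1.0:
        raise ValueError("position must be between -1 and 1")
    angle = (position + 1.0) * math.pi / 4.0  # maps to 0 .. pi/2
    return math.cos(angle), math.sin(angle)


if __name__ == "__main__":
    for position in (-1.0, -0.5, 0.0, 0.5, 1.0):
        left, right = constant_power_pan(position)
        print(f"pan {position:+.1f}: left {left:.3f}, right {right:.3f}")
```

At the centre position both loudspeakers receive the same gain, which is the condition under which the sound appears to come from midway between them.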

Digital Signal Processing Effects and the Mix

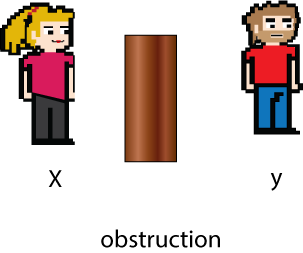

Another means to create the sense of environmental space, and thus our location in that space, is through digital signal processing effects, including reverberation (as discussed above) and various filters. The use of filters to mimic sound propagation effects can help to create a sense of the physical, environmental space in which sounds take place. For instance, when a sound emitter is completely behind an obstruction such as a wall that separates the emitter (at point x) from the listener (at point y), the sound is occluded (Figure 4). A simple analogy is to imagine closing a window to shut out a neighbour’s noise: the sound can still be heard, although the overall volume is attenuated and certain frequencies are significantly reduced. To create this effect sonically, the overall volume is typically reduced and a low-pass filter is applied. A low-pass filter sets a cut-off frequency above which the sound signal is attenuated or removed, allowing the lower frequencies to “pass through” while cutting off the higher frequencies. The degree of filtering applied (the settings controlling the amount of volume attenuation and the range of frequencies cut off) depends on the type and thickness of the material or object between the emitter and receiver. Both the direct path of the sound and any reverberations in the space are therefore “muffled” by the attenuation and the filter.
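The occlusion treatment described here (overall attenuation plus a low-pass filter) can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not a production effect: it uses a simple one-pole low-pass filter, and the function names, the 800 Hz cut-off, the -12 dB attenuation, and the 48 kHz sample rate are all hypothetical values chosen for the example.

```python
import math


def one_pole_lowpass(samples, cutoff_hz, sample_rate_hz=48000):
    """Apply a one-pole low-pass filter: y[n] = y[n-1] + a * (x[n] - y[n-1])."""
    a = 1.0 - math.exp(-2.0 * math.pi * cutoff_hz / sample_rate_hz)
    out, y = [], 0.0
    for x in samples:
        y += a * (x - y)
        out.append(y)
    return out


def occlude(samples, cutoff_hz=800.0, attenuation_db=-12.0, sample_rate_hz=48000):
    """Muffle a signal: low-pass filter it, then reduce the overall level."""
    gain = 10.0 ** (attenuation_db / 20.0)
    filtered = one_pole_lowpass(samples, cutoff_hz, sample_rate_hz)
    return [gain * s for s in filtered]


def sine(freq_hz, n_samples=4800, sample_rate_hz=48000):
    """Generate a unit-amplitude test tone."""
    step = 2.0 * math.pi * freq_hz / sample_rate_hz
    return [math.sin(step * n) for n in range(n_samples)]


def rms(samples):
    """Root-mean-square level of a list of samples."""
    return math.sqrt(sum(s * s for s in samples) / len(samples))


if __name__ == "__main__":
    for freq_hz in (100.0, 8000.0):
        level = rms(occlude(sine(freq_hz)))
        print(f"{freq_hz:>6.0f} Hz tone after occlusion: RMS {level:.4f}")
```

Running the example shows the high tone reduced far more than the low tone, which is the “muffled” quality of a sound heard through a wall. A thicker or denser obstruction would correspond to a lower cut-off and more attenuation.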

If the direct path between the emitter at x and the listener at y is blocked, but the reverberation angles are not blocked, the sound is said to be obstructed (Figure 5). Here, the direct signal is muffled through attenuation and filters, but the reverberations/reflections of the signal arrive clearly at the listener.

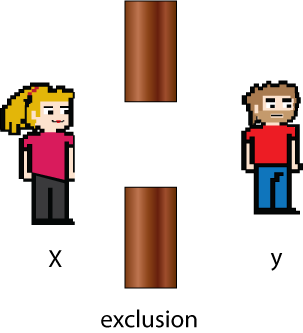

Finally, if the direct path of the sound signal is clear but there is an obstruction between the reverberations from the sonic emitter at point x and the listener at point y, the sound is said to be excluded (Figure 6). The direct signal is clear, but the reflections are attenuated and treated with a low-pass filter.
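The three cases described above differ only in which signal path is blocked, and therefore in which path receives the attenuation and low-pass filter. A small Python sketch of that decision follows. The class and function names are hypothetical, and the fourth, unblocked case is labelled “clear” here only for completeness.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PathTreatment:
    """Which signal paths should be attenuated and low-pass filtered."""
    muffle_direct: bool
    muffle_reflections: bool


def classify_path(direct_blocked, reflections_blocked):
    """Return (term, treatment) for a given blocking situation."""
    if direct_blocked and reflections_blocked:
        return "occluded", PathTreatment(True, True)
    if direct_blocked:
        return "obstructed", PathTreatment(True, False)
    if reflections_blocked:
        return "excluded", PathTreatment(False, True)
    return "clear", PathTreatment(False, False)


if __name__ == "__main__":
    for direct_blocked in (True, False):
        for reflections_blocked in (True, False):
            term, treatment = classify_path(direct_blocked, reflections_blocked)
            print(f"direct blocked={direct_blocked}, "
                  f"reflections blocked={reflections_blocked}: {term}, {treatment}")
```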

Such filters can also, of course, be used to create subjective distances, for instance in a scene where a character is stuck inside a vehicle sinking in a lake. A low-pass filter placed on the character’s sound not only illustrates their physical position in space, but reinforces the sense that they are separated from us (and from their escape), whereas the absence of a filter would place us “in the car” with the character.

Conclusions

I have outlined here a variety of means of creating an auditory perspective that combines the spatial sense and the subjective sense, including microphone placement, positioning of sounds in the loudspeakers, and digital signal processing effects. The auditory perspective can, as with camera angle, allow us to experience the fictional world through the body of a character, thus enabling identification with that character. The techniques described transpose and recreate the fictional space in our own physical space, helping to extend the world on the screen into our own world. By using these techniques, we can create an important sense of audience identification and perspective.

Special thanks to Karen Collins for contributing this article. Figure graphics from Vecteezy.com

Great article on how the sound of the world around us can be re-created. Two important considerations would be how the ear responds to real-life sounds (poor low- and high-frequency response) and how sound behaves with distance (less low- and high-frequency energy), and how this all relates to microphone polar pattern. Proximity effect (or the lack of it) can skew the perspective. Secondly, compression can also skew the perspective, so use it carefully.

As an ear-training exercise I’d encourage everyone to listen to a (stereo) mic feed with closed-back headphones whilst wandering between different types of audio spaces. A trip from your bedroom to the kitchen via the bathroom, for example, will help you appreciate just how much sound can change.

Great article. Physical as well as emotional perspective and distance often tend to be overlooked.

There is a great scene to illustrate this point in “Citizen Kane”. Susan Alexander, then wife of Charles Foster Kane, is working on a puzzle on the floor of a large hall in the mansion. When Kane enters, they have a discussion from opposite corners of the room, and I’d dare to say that even more than the haunting music and the visual perspective, it is the amount of reverberation in their voices that illustrates the emotional distance between them.

Some people may say that this was accidental and just a consequence of the choice of location. But very few things in filmmaking are accidental. And based on the following passage from Bordwell and Thompson about Citizen Kane, most of these doubts could easily be dispelled:

“Citizen Kane, for example, offers a wide range of sound manipulations. Echo chambers alter timbre and volume. (…) Moreover, in Citizen Kane, the plot’s shifts between times and places are covered by continuing a sound thread and varying the basic acoustics.”

[Bordwell, David and Thompson, Kristin, “Film Art: An Introduction”, 8th ed., University of Wisconsin, McGraw-Hill, New York, 2008, pg. 268]

For additional bibliographical information on this subject, I also suggest having a look at Van Leeuwen, Theo, “Speech, music, sound”, Macmillan Press Limited, London, 1999, pg. 12.

Thanks again Karen and Jack for sharing this article with us. I’ll share it with my students right now! :)