Yeah! Another of my favorite video games of the last year. Mass Effect 2 offers an impressive sci-fi adventure, full of action, detail and outstanding sound. The game originally launched a year ago for Xbox 360 and Windows, but it was released for PS3 just a few days ago, so this is the perfect moment to share with you this interview I did with several members of the Mass Effect audio team at BioWare.

Interviewees are:

- Rob Blake (Audio Lead)

- Joel Green (Sound Designer)

- Jordan Ivey (Sound Designer)

- Jeremie Voillot (Sound Designer)

- Mike Kent (Sound Designer)

- Real Cardinal (Sound Designer)

- Steve Bigras (Sound Designer)

- Terry Fairfield (Sound Designer)

Designing Sound: What was your objective when creating the sound for the Mass Effect sequel? What new challenges did Mass Effect 2 present compared to the first title?

Rob Blake: We wanted to build on the great foundations of Mass Effect 1, adding more sonic development and feedback for the player. RPGs are all about customization and progression, so we wanted to add more depth to the sound systems. We also wanted to increase the clarity and definition of the sounds, but retain consistency with the Mass Effect universe.

BioWare understands the importance of sound so we have a lot of support from the team; it’s very much a collaborative effort rather than audio being a support team outside of the main development team. So in order to make many of the sonic improvements we worked very closely with other departments, sometimes integrating ourselves directly into other teams.

Mass Effect 2 is a much darker and grittier game than the first, so it was a challenge for us to nail that different feel whilst retaining consistency with the first. Really, though, the biggest challenges came from the fundamental changes to the tech and team since ME1, which I’ll get into later :)

DS: How was the development process? How long did the whole process take? How was the team made up?

Rob Blake: Mass Effect 2 was not a sequel from an audio perspective. We changed our entire audio system from ISACT over to Audiokinetic’s Wwise and therefore all of the assets, tech, tools and systems had to be re-implemented. We took this opportunity to rework pretty much all of the sound in the game; from the powers and weapons to the music system and VO. It was a pretty huge undertaking and I spent the first year on my own setting up the foundations for the project before the audio team came on board for the final 8 months of production. As BioWare’s first full Wwise project there were many unknowns when I started development and many of the team (including myself) were new to BioWare, plus our schedule was pretty aggressive so we had to get things right the first time.

We had a large team: myself as the Audio Lead, up to nine in-house sound designers at peak (who’ll answer some of the later questions), our audio producer Marwan Audeh, a couple of audio programmers and some audio QA. This team size was necessary to complete the 60 levels, 150 cinematics, 20,000 conversation animations, 32,000 lines of VO and approximately 40 hours of gameplay to the level of quality we wanted to reach. The large-scale RPGs that we make at BioWare require teams this size to hit decent quality without taking years to complete, but we’re still less than ten percent of the overall team size.

The work was split up by ‘department’, with sound designers assigned to different areas to maximize ownership and responsibility. Due to the large nature of our games we tend to keep people focused on specific areas for each project, but make sure they never get pigeon-holed in an area for multiple games. Keeping people happy is really important to making sure they do good work, so we always strive to put people on things they want.

DS: What were some of the tools used to create and mix the sounds on the game?

Rob Blake: Our primary tools are the usual suspects: Pro Tools, Sound Forge, Waves plug-ins and Native Instruments Komplete, but we also have various other tools; Camel Audio’s Alchemy is a big favorite with the team, as is Ableton Live. Each sound designer also has a couple of computers and a 5.1 Genelec system. We also have a couple of sound recorders and plenty of mics, and we try to do as much recording as possible… despite the chilly Edmonton winter ☺

DS: Could you share some info about the sound design/editing on the cinematic animations?

Mike Kent: For the cinematics on Mass Effect 2 we decided to run things like a post house would. We had a sound designer assigned to creatures, a sound designer assigned to vehicles and backgrounds, one guy on Foley and another on hard FX. We had a priority list of which cinematics were to be done first, and each sound designer would work on their scheduled part of the cinematics at hand. Once all the pieces for a cinematic were finished, it would go to the mixer, who would start to mix all of the elements together. After the mix was approved, we then had to break the cinematics up into smaller pieces to ensure sync.

The cinematics were broken up into in-game portions and Bink portions. Your party could contain any combination of followers, and the player could make choices between cutscenes that affected the outcome of a scene, so we had to break these scenes down into small chunks.

DS: And what about the mix of those cinematics?

Mike Kent: After getting all of the stems from the other designers, you have to start looking at the scene objectively and determine which sounds are needed to tell the story of that scene. We omitted a lot of sounds in order to focus on the ones that were important to the story. Every scene had to fit with the in-game assets, so we did a lot of pre-work to ensure this was achieved.

Rob Blake: We streamed the music and sound effects for the cinematics separately. This meant that during complicated cinematic sequences made up of multiple scenes we could play a single music track over the whole thing to keep musical flow while having the sound effects as multiple chunks to ensure perfect sync. It also meant that we could mix the music and sound design separately at runtime.

DS: How did you approach the sound for the final boss?

Joel Green: We split the end boss audio into three categories: movement, weapons, and voice. For the movement sounds, many layers of servos and hydraulics were cut to each of the boss’ animations. The key to making this work was running the whole bus through an automated formant filter. This melded the layers into a more cohesive sound that felt like a single entity rather than an army of little robots. It also added a much needed organic element to the sound, bringing it closer to the fiction. The weapons were created using a big nasty fog horn as their base element. That tone cuts through any mix, and has a natural “gigantic machine approaching” feel. For the voice, the same horn was time-stretched until it was very granular and synthetic sounding, then run through the formant filter to give it a human vocal quality.

DS: Could you describe how you made some of the creature sounds?

Terry Fairfield: Our target for creatures was to avoid the ‘animal sandwich’ effect of many layered creature samples and to create unique, coherent sounds. To this end, vocalizations were performed by sound designers, then edited and processed to give them the appropriate size, mouth shape and so on. More robotic or synthetic vocalizations were synth performances edited down to match the appropriate state or animation.

To hook up the sounds we had all available AI states and could script a creature’s reactivity as well as attaching sounds to animations. Adding exertions to animations added a scary and visceral quality to close range attackers such as the zombie-robot husks while tunable, distance-based triggers let the player know that a stealthier attacker was near.
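
Terry doesn’t go into how the distance-based triggers were built, so here is only a minimal sketch of the general idea, written in Python for readability. The `ProximityCue` class, its radii and the re-arm behavior are hypothetical, not BioWare’s implementation: the cue fires once when the listener comes within a tunable trigger radius, and only re-arms after the listener moves back beyond a larger radius, so it doesn’t retrigger every frame at the boundary.

```python
import math
from dataclasses import dataclass


@dataclass
class ProximityCue:
    """Hypothetical distance-based trigger for a 'stealthy attacker nearby' cue."""

    trigger_radius: float  # fire when the listener gets at least this close
    rearm_radius: float    # re-arm once the listener is at least this far away
    armed: bool = True

    def update(self, listener_pos, creature_pos):
        """Return True on the frame the cue should play."""
        distance = math.dist(listener_pos, creature_pos)
        if self.armed and distance <= self.trigger_radius:
            self.armed = False
            return True
        # Hysteresis: a larger re-arm radius stops the cue from retriggering
        # every frame while the listener hovers around the trigger boundary.
        if not self.armed and distance >= self.rearm_radius:
            self.armed = True
        return False


if __name__ == "__main__":
    cue = ProximityCue(trigger_radius=10.0, rearm_radius=15.0)
    path = [(20, 0, 0), (9, 0, 0), (5, 0, 0), (12, 0, 0), (16, 0, 0), (9, 0, 0)]
    print([cue.update((0, 0, 0), p) for p in path])
    # prints [False, True, False, False, False, True]
```

Both radii are exposed as parameters, which is one way of making such a trigger "tunable" per creature type.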

DS: The biotic powers and weapons sound really great. How were they created?

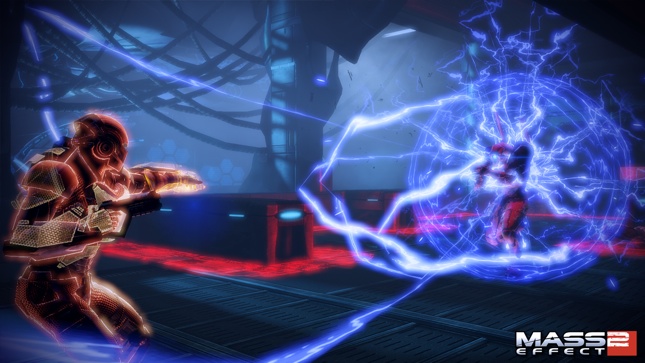

Real Cardinal: The Biotic sounds were created almost completely from custom synthetic source sounds. We wanted to achieve a softer and more tonal sound, but still with enough impact to cut through the mix.

The bulk of the tonal elements were created with standard FM and subtractive synthesis. I spent a bit of time setting up patches that were very ‘playable’ with a keyboard modwheel and knobs, to create variation. A lot of the tonal shifts came from careful modulation of the synthesis parameters.

I then recorded long performance sessions directly into Pro Tools and Ableton Live along with some of the visual effect prototypes, trying to make the sounds as gestural and alive as I could.

I started stacking and mixing the sounds once I had a large enough palette. A lot of the character of the sound came from drastically stretching and pitching the synthetic sounds. Since the source sounds were recorded at 96k and contained very few noise elements, I was able to alter and pitch them very drastically to get character without losing much high end or smearing the mids with aliasing.
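
Why recording at 96k helps here can be shown with a little arithmetic. Assuming the pitch shift works by resampling (varispeed-style, so duration changes along with pitch), every frequency in the source is scaled by 2^(n/12) for a shift of n semitones. A recording can only contain content up to half its sample rate (the Nyquist frequency), so the highest frequency left after shifting down is easy to compute. The helper names below are illustrative only; the interview doesn’t say exactly which tools or algorithms Real used.

```python
def pitch_ratio(semitones):
    """Frequency scale factor for a pitch shift of `semitones` (negative = down)."""
    return 2.0 ** (semitones / 12.0)


def top_frequency_after_shift(sample_rate_hz, semitones):
    """Highest frequency the source can still contain after a resampling pitch shift.

    Assumes the source has usable content all the way up to its Nyquist
    frequency (sample_rate / 2), which is plausible for clean synth sources.
    """
    nyquist_hz = sample_rate_hz / 2.0
    return nyquist_hz * pitch_ratio(semitones)


if __name__ == "__main__":
    for rate in (48_000, 96_000):
        top = top_frequency_after_shift(rate, -12)  # down one octave
        print(f"{rate} Hz source, -12 semitones: content up to {top:.0f} Hz")
```

Shifted down an octave, a 48 kHz source tops out at 12 kHz and sounds noticeably dull, while a 96 kHz source still reaches 24 kHz, above the roughly 20 kHz limit of human hearing.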

We used a low pass filter duck in game on all environmental sounds for the softer ‘space distortion’ type biotic impacts and visual effects. From a technical perspective, these sounds don’t inherently cut through a mix, so opening some space in the spectrum upon impact helps with that. Since Biotic powers are space and gravity distortion effects, from a creative perspective we wanted it to sound like the biotic effects were distorting the environment. A good (but subtle) example of this is when the player casts the ‘Throw’ Biotic; there is a quick low pass filter duck on the blur ripple visual effect that comes from the player.
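
As a rough illustration of what a low-pass filter duck does, here is a minimal sketch under stated assumptions: the ambience passes through a simple one-pole low-pass filter whose cutoff sweeps down on a biotic impact, holds briefly and then sweeps back open. The envelope shape, the one-pole filter and all the default values (800 Hz, 20 ms and so on) are assumptions for illustration only; the interview doesn’t describe how the duck was actually configured in Wwise.

```python
import math


def duck_cutoff_hz(t, duck_hz=800.0, open_hz=20_000.0,
                   attack_s=0.02, hold_s=0.1, release_s=0.4):
    """Cutoff of the ambience low-pass filter, t seconds after a biotic impact.

    Sweeps are interpolated geometrically (linear in log-frequency),
    which sounds more even than a linear sweep in Hz.
    """
    if t < 0.0:
        return open_hz
    if t < attack_s:  # fast sweep down into the duck
        frac = t / attack_s
        return open_hz * (duck_hz / open_hz) ** frac
    if t < attack_s + hold_s:  # hold the duck
        return duck_hz
    if t < attack_s + hold_s + release_s:  # slower sweep back open
        frac = (t - attack_s - hold_s) / release_s
        return duck_hz * (open_hz / duck_hz) ** frac
    return open_hz


def lowpass_duck(samples, sample_rate, impact_time_s, **envelope):
    """Run mono samples through a one-pole low-pass with a ducked cutoff."""
    out = []
    y = 0.0
    for n, x in enumerate(samples):
        t = n / sample_rate - impact_time_s
        cutoff = duck_cutoff_hz(t, **envelope)
        # One-pole smoothing coefficient for this cutoff frequency.
        alpha = 1.0 - math.exp(-2.0 * math.pi * cutoff / sample_rate)
        y += alpha * (x - y)
        out.append(y)
    return out
```

A one-pole filter is a deliberately crude stand-in; the point is the shape of the cutoff envelope, which briefly carves out the upper spectrum of the environment so the softer biotic impact has room to be heard.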

Mike Kent: The main gun sounds were created using a combination of granular synthesis, spectral smearing, real guns and Indy car recordings. The biggest things we added in the end were a steam release when your gun used up its thermal clip, and an ammo sound that pitched up when you were almost out of thermal clips. This helped the gameplay by giving players audio feedback when they were running out of ammo.
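
Mike doesn’t say how the pitch rise was driven. In middleware such as Wwise this kind of mapping would typically be a game parameter (RTPC) driving pitch, but as a standalone sketch, a hypothetical mapping from remaining thermal clips to a pitch offset might look like this. The threshold of three clips and the four-semitone maximum are made-up values.

```python
def low_ammo_pitch_semitones(clips_remaining, warn_threshold=3, max_shift=4.0):
    """Hypothetical pitch offset for the ammo sound as thermal clips run low.

    No shift while the player has `warn_threshold` clips or more; below that,
    the pitch rises linearly, reaching `max_shift` semitones at zero clips.
    """
    if clips_remaining >= warn_threshold:
        return 0.0
    clips_remaining = max(clips_remaining, 0)
    depletion = (warn_threshold - clips_remaining) / warn_threshold
    return max_shift * depletion


def playback_rate(semitones):
    """Convert a pitch offset in semitones into a resampling playback rate."""
    return 2.0 ** (semitones / 12.0)


if __name__ == "__main__":
    for clips in (5, 3, 2, 1, 0):
        shift = low_ammo_pitch_semitones(clips)
        print(clips, round(shift, 2), round(playback_rate(shift), 3))
```

Keeping the shift at zero until a threshold means the cue only becomes noticeable when it carries information, which matches the feedback role Mike describes.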

DS: How were the implementation and interactive mixing processes done?

Rob Blake: We did our own custom integration of Wwise into Unreal, so I worked closely with our audio programmer (David Streat) to design all the tools we’d use in Unreal. Things like sound emitters, audio volumes, kismet actions, the music system, Foley system, the GUI system, etc… all had to be redesigned to work with Wwise. I won’t go into the boring details as to how all of these work, but suffice to say they were built to be as easy and quick to use as possible, but with the flexibility to let us cater for all the crazy things the designers were doing in the levels.

I designed the structure of our Wwise project to be built specifically for a large team, so we ended up with a huge amount of Wwise work units (over 300 in the actor-mixer hierarchy), but ultimately this meant that we had almost no conflicts. I found that separating work units by ‘discipline’ and having as much consistency across all areas of Wwise made navigating our content much easier. Considering it was our first Wwise project our experience was incredibly positive and we’re sticking with it for the future.

The version of Wwise we shipped with didn’t have much in the way of dynamic mixing (it’s considerably better now), and my early investigations into states had not proved to be the direction I wanted to go in, so I ended up not using any states in ME2. Instead we used a mixture of RTPCs, ‘set’ actions and Wwise’s ducking system. It worked pretty well considering how complex it was; however, dynamic mixing is definitely one of the areas we’ll be focusing on for ME3 to create a cleaner and more focused mix, especially now that there’s a ‘meter’ plug-in!
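
For readers unfamiliar with the terms: an RTPC (Real-Time Parameter Control) maps a game value onto a sound property through a curve, and ducking lowers one group of sounds while another plays. The sketch below models both in a simplified, hypothetical way, not Wwise’s actual implementation: a piecewise-linear curve, and volume offsets in dB that simply add up, since adding decibels is equivalent to multiplying linear gains. The player-health curve and the dialogue duck are invented examples.

```python
from bisect import bisect_right


def rtpc_value(curve, x):
    """Evaluate a piecewise-linear curve given as sorted (input, output) points."""
    xs = [point[0] for point in curve]
    if x <= xs[0]:
        return curve[0][1]
    if x >= xs[-1]:
        return curve[-1][1]
    i = bisect_right(xs, x)  # xs[i - 1] <= x < xs[i]
    (x0, y0), (x1, y1) = curve[i - 1], curve[i]
    return y0 + (y1 - y0) * (x - x0) / (x1 - x0)


def bus_volume_db(base_db, rtpc_offsets_db=(), active_ducks_db=()):
    """Offsets in dB add together (equivalent to multiplying linear gains)."""
    return base_db + sum(rtpc_offsets_db) + sum(active_ducks_db)


if __name__ == "__main__":
    # Invented example: pull the music down as player health drops.
    health_to_music_db = [(0, -12.0), (50, -3.0), (100, 0.0)]
    offset = rtpc_value(health_to_music_db, 25)  # -7.5 dB
    # An invented -6 dB duck while dialogue is playing on another bus.
    print(bus_volume_db(0.0, [offset], [-6.0]))  # prints -13.5
```

Because every contribution is an additive dB offset, several RTPCs and ducks can act on the same bus at once without needing a central mix state, which is roughly the kind of state-free approach Rob describes.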

DS: How did you approach foley in the game? What kind of special props/sources were recorded?

Jeremie Voillot: We wanted to record a lot of original sound for ME2, and Foley was definitely no exception. In order to simulate the futuristic materials in the game we looked to various forms of sporting equipment. We found that protective gear such as motorcycle body armor, hockey shoulder pads and old-school ski boots gave us the nice ‘clacky’ feel that matched the armor types in the game. We also recorded from a near and a far perspective, mixing between the two during perspective changes in game. This smoothed out the transitions nicely. The microphones used were a Schoeps CMIT 5U as our distant mic (6 ft) and a Neumann KMR 81i as our close mic (2.5 ft).
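
Jeremie doesn’t specify the crossfade curve used between the close and distant Foley takes, so the following is only a sketch assuming a common choice: an equal-power (sine/cosine) crossfade, which keeps the perceived loudness roughly constant as the blend moves from the close mic to the distant one. The `blend` parameter, and how the game would drive it on a perspective change, are hypothetical.

```python
import math


def perspective_gains(blend):
    """Equal-power gains for the (close, distant) Foley layers.

    blend = 0.0 plays only the close mic, 1.0 only the distant mic.
    """
    blend = min(max(blend, 0.0), 1.0)
    angle = blend * math.pi / 2.0
    return math.cos(angle), math.sin(angle)


def mix_perspectives(close, distant, blend):
    """Mix two equal-length mono buffers recorded at the two perspectives."""
    g_close, g_distant = perspective_gains(blend)
    return [g_close * c + g_distant * d for c, d in zip(close, distant)]


if __name__ == "__main__":
    for blend in (0.0, 0.25, 0.5, 0.75, 1.0):
        g_close, g_distant = perspective_gains(blend)
        # The summed power stays at 1.0 across the whole fade.
        print(blend, round(g_close, 3), round(g_distant, 3),
              round(g_close ** 2 + g_distant ** 2, 3))
```

A plain linear crossfade would dip by about 3 dB in the middle for uncorrelated material, which is why equal-power curves are a common default for blending two mic perspectives.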

DS: Can you detail the work that went into the environmental sounds and any challenges you faced?

Jordan Ivey: With so many different planets and races, one of the most important factors is to really give each environment its own sense of identity. You have to consider the history of both the dominant species and the general wildlife, as well as technology, weaponry, vehicles and any other aspect that may influence the ambience of the world. Coming up with so many variations was a great challenge, but also a lot of fun. The tech and industry sounds were probably the largest group of sounds, but the varied and detailed races were a great source of inspiration. For example, the Krogans are a large, brute race of warriors, but not the most technologically advanced. The approach to their home world was more sparse and dirty: older machinery, loud hissing pipes and a generally darker tonal element than, say, the Quarians, who come from a more technologically advanced background. Sleek GUIs, cleaner ambiences and a less ‘noisy’ atmosphere helped to contrast these two races.

This was also the approach taken with the cities and hubs in Mass Effect 2. Omega is a dark place; it’s full of thieves and freelance killers, and run far outside of the law. The idea with a city like this was naturally to give it a lot more grit. Hearing dark heavy music playing through walls, arguments and gunshots heard off in the distance, smashing bottles and shady dealers all added to its dark setting. A place like the Citadel was a polar opposite though. Lots of bright shimmering tones that came from the many advertisements found around the hub, as well as some nicer music for the shops and vendors were some of the things that helped to give the Citadel a more welcoming, pleasant tone.

DS: There are a huge number of conversations in the game. How did you create the audio for them all?

Steve Bigras: It was a combination of reusing in-game assets and custom assets. We had a megabyte per level of custom audio for cinematic design, so with that number in mind I went about making sure everything was hit to the BioWare level of awesomeness. Since Mass Effect 1’s conversations had very little Foley coverage, we aimed for an ambitious ‘cover everything’ mentality for ME2. Mass Effect’s conversation animations are individually custom-made, so we had to hit each one.

As a fresh BioWarian, cutting the equivalent of a 30 hour movie in about 8 months was a big undertaking. The only way this was possible was to work very closely with the conversation team. I had two offices set up; one in the cinematic design ‘pit’ and the other in the sound department where I could listen to the game in 5.1 and create audio assets. They ensured I was kept in the loop on any changes affecting audio which eliminated the majority of surprises. As I was integrated closely into the team and involved with all the reviews I was able to give feedback from an audio perspective in the early stages. This allowed me to add to and support the story rather than be purely reactive to the visuals. Establishing that close interaction allowed us to really add depth to the story and make it as engaging for the player as possible.

DS: Did you find any limitations on the technical side? What would you like to have available for developing sound on different platforms?

Rob Blake: Wwise was absolutely awesome to work with and the tools we built into Unreal also worked incredibly well, so we were pretty happy. We are almost at a point now where the technology is good enough that we can do whatever we want and we should be focusing more on the creative rather than the technical.

That said, there were a few challenging areas, such as the extreme amount of disc streaming we were doing, which limited the amount of audio we could stream. Also, we’ve developed an incredibly complex VO importing system that builds all the audio content, makes ‘synth voices’ if real VO doesn’t exist and updates all the lipsyncing. However, this system would sometimes take a while to process the content when we had big VO deliveries, which sometimes slowed us down. Again, we’ve already made significant improvements to this for ME3.

DS: Can you give us any information about Mass Effect 3?

Rob Blake: I’d love to! But I can’t ☺ What I can say from an audio perspective is that because we’re not switching audio engines like we did on ME2 we’ll have much more time and stability to focus on the awesome content; we’ve got some amazing things lined up for audio on ME3.

It’s going to be an amazing project and we’re all incredibly excited to be working on it, I can’t wait to see how people react to it!

Feel free to drop in on the Mass Effect forums if you have any questions, I’m often lurking around there ☺

Thank you so much to DS and to everyone involved in this interview. Mass Effect 1 & 2 are two of my favorite games of the current generation, and possibly of all time.

ME2 in particular was a treat audio-wise; the sound was always that extra bit of sweetness in the game. Stepping into Club Lux for the first time in ME2 was just one of those great moments where you’re completely loving the experience of the music pumping and all the people bustling around you.

It’s indescribable how excited I am for Mass Effect 3, and I hope it brings some extra geeky audio goodness that’ll give me the same (and hopefully better) feelings and experiences I had with the previous two games.

Keep up the good work.

I see a total of seven sound designers in this list, along with an audio lead. Are those all in-house, or are some of them contractors? If not, it’s refreshing to see a group take sound design seriously enough to have seven on staff. Great work as always, I am sure; BioWare is a group that all of us watch (and listen to) very carefully.

Jeremie, great job on the foley! Very much enjoyed the series so far and cannot wait for NR 3! ;-)

great interview & a great game

The characters’ voices in ME2 are some of the best I’ve heard (along with Dragon Age).

I would also be interested to know if that is seven full-time sound designers for the whole length of the project!

Thanks all. Everyone in this interview is a full-time employee. My coworkers are all talented mofos! It IS a lot of people to have on a project, but we really needed that many, not only to cover the amount of content (which is staggering on a game like this), but to keep the quality high and immersive throughout the game (as Rob mentioned).

Yes, great game, great audio and a really good read, guys. Congrats to the dev team.

And as noted by others, the voice acting added a lot to this game.