Gamasutra has published another great featured article on audio, in which Rob Bridgett examines the key issues in game dialogue production and possible solutions to common problems. Dialogue production for a large budget, cinematic video game can be an intense and often brutally challenging process. Getting an actor in the booth and reading a script is in itself a … [Read more...]

Archives for October 2009

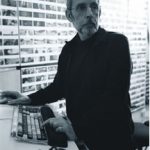

Walter Murch Special: Interviews

The Walter Murch Special ends here, after a very interesting journey through articles about one of the most important men in the history of audio and video creation. November will be a great month for the blog... If you were hoping for game audio articles, you'll love the November Special! Finally, let's read a nice round of interviews with Walter Murch selected from several … [Read more...]

More than 50 Articles/Tutorials about Sound Design, Recording and more, Plus Wooshes Sound Design

The woosh is a sound effect used very often out there, but do you know everything about it? In this tutorial, Tim Prebble, from Music of Sound, explains how to record and process wooshes. The (semi/un) technical term is ‘Woosh’, or maybe ‘Whoosh’ if you are American – i’m not so I don’t know, but hey i’ve seen a few files labelled as such, so call them whatever you like but they … [Read more...]

Walter Murch Special: K-19: The Widowmaker

The story of the disaster aboard the Soviet nuclear submarine K-19 reached theaters with K-19: The Widowmaker, an independent film that cost $100,000,000 to make. It is an interesting film with a lot of foley work and impressive recording. Walter Murch worked as re-recording mixer. Let's read this article on … [Read more...]

Language Design Techniques for Sound Designers

Language Design for Sound Designers is an amazing article written by Darren Blondin. I read it last year, but found it again while reviewing my bookmarks. I had the idea of sharing it on Twitter and noticed that many people didn't know about it (and they really liked it!), so for those who don't use Twitter or don't follow Designing Sound @ Twitter, I decided to make this … [Read more...]

Notes of the Star Trek MPSE Sound Show

Last Tuesday (October 20), Motion Picture Sound Editors presented a new Sound Show, an exploration of the sound of Star Trek, with the award-winning Supervising Sound Editor Mark Stoeckinger (Mission: Impossible III, The Last Samurai) and Co-Supervising Sound Editor Alan Rankin (Windtalkers, The Amityville Horror). In the show they demonstrated the process for the creation of … [Read more...]

Walter Murch Special: The Process of Transition and The Role Of Sound In The Image Interpretation

The Walter Murch Special continues, with more sound techniques and fantastic theories by the sound master Walter Murch. Let's check two articles from Filmsound. The first one is an interview where he talks mainly about transitions: At the basic level, a transition is simply the process of changing from some state A to another state, B. What we should examine carefully is the … [Read more...]